AI in medical devices and drug discovery often appears daunting: algorithms that learn, evolving data pipelines, and a dense layer of European regulations. Yet the path forward is increasingly evident. The Medical Device Regulation (MDR), the General Data Protection Regulation (GDPR), and the EU AI Act now provide a shared framework. Additional guidance from the Medical Device Coordination Group (MDCG), Team-NB, and the European Medicines Agency (EMA) further clarifies expectations for AI-driven software in medical devices and in the lifecycle of medicines.

The good news is that this framework is no longer abstract. Key guidance documents are available, and regulators have set clear expectations around clinical evidence, lifecycle management, and data protection. The challenge for medical device manufacturers is less about identifying which rules apply and more about embedding them at the right points in the product lifecycle, taking into account, for example, the staggered application dates of the AI Act, so that compliance, safety, and performance advance hand in hand.

1. The Integrated Regulatory Framework

In Europe, three pillars define the compliance landscape for AI-enabled medical devices:

- MDR ensures products are safe and clinically effective.

- GDPR protects personal and health data processed by devices.

- AI Act (Regulation (EU) 2024/1689, in force since August 2024 with obligations applying in stages) introduces a risk-based framework tailored for AI, emphasizing data quality, robustness, and transparency.

These regulations overlap. For example, MDR already requires post-market surveillance, and the AI Act reinforces this with ongoing monitoring of model performance and drift. GDPR’s principle of data minimization ties directly into the AI Act’s requirement for high-quality, representative training datasets. Manufacturers must also ensure that cross-border flows of sensitive health data comply with GDPR adequacy rules or safeguards and that system architecture respects local hosting or residency requirements where applicable.

Global standards are aligning as well. The International Medical Device Regulators Forum (IMDRF) promotes Good Machine Learning Practice, providing a common baseline for documentation of data pipelines, reproducibility of experiments, and explainability features. Regulators in the United States (the Food and Drug Administration, FDA), Japan, and Australia are adopting similar expectations that align closely with EU standards.

The result is a system-of-systems approach, where MDR, GDPR, and the AI Act cannot be addressed separately. Instead, manufacturers must design a unified lifecycle process covering risk management, data governance, clinical evidence generation, and transparency obligations across every lifecycle stage. In practice, a single quality and documentation framework within the Quality Management System (QMS), ideally structured as part of an Integrated Management System (IMS), should demonstrate how requirements from MDR, GDPR, and the AI Act are embedded at the right stages of product development, from early design and dataset curation to post-market monitoring and algorithm retraining. Success depends on cross-functional cooperation between R&D, Quality, Regulatory, Clinical, IT Security, and Data Protection teams.

2. Thinking in Product Lifecycle Stages

A lifecycle-based approach helps reduce redundancy and ensures that evidence is collected correctly. Rather than addressing each regulation line by line, manufacturers map the requirements onto each stage of development. While the principle applies across the entire product lifecycle, this article focuses on the additional elements specific to AI-enabled medical devices; the general MDR obligations remain in place.

Let’s take a closer look at how the lifecycle principle applies across all stages of medical device development:

Stage 1: Concept and Development

The focus is on defining purpose, setting boundaries, and identifying concrete risks with corresponding risk controls. The primary regulatory considerations specific to AI medical devices at this point include:

- Data Governance (GDPR + AI Act): Data used for training and validation must be legally sourced, de-identified where possible, and representative of the intended patient population. Dataset provenance, bias mitigation strategies, and data quality controls must be documented, ensuring that training, validation, and testing datasets remain fit for purpose throughout the device lifecycle.

- Dataset Documentation: Maintaining detailed records of dataset versions, labeling processes, data selection criteria, and data augmentation methods to ensure reproducibility, auditability, and transparency of how training and validation data were chosen and applied.

- Risk Management (ISO 14971, MDCG guidance): Addressing AI-related hazards, such as algorithmic bias, dataset shift, or cybersecurity threats, and linking them to risk controls. Examples include defining thresholds for acceptable false negatives in diagnostic AI, setting monitoring triggers for data drift, or implementing intrusion detection systems to mitigate adversarial attacks.

- Transparency and Instructions (AI Act): Clear user guidance on AI functionality, its limitations, and how to interpret its output. These requirements are driven by real use cases: the AI Act obligates manufacturers to provide mechanisms for human oversight, including the ability to pause or stop the AI algorithm at any time if results appear unreliable or unsafe. In addition, manufacturers must prepare supporting documentation that records how transparency obligations are implemented, such as user manuals, model interpretability reports, and logs of algorithm decisions, so that auditors and regulators can verify compliance.

- Continuous Learning Controls: Defining whether the AI is locked or adaptive, and documenting retraining triggers and governance. This expectation is not yet a formal EU legal requirement under MDR, but it is highlighted in the EU AI Act for high-risk AI systems and in international guidance (e.g., FDA guidance on predetermined change control plans). Manufacturers should treat it as an emerging best practice aligned with upcoming obligations.
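The dataset documentation item above can be made concrete with a small versioned manifest. The following is a minimal sketch, not a prescribed format: the `DatasetRecord` fields, the function names, and the choice of SHA-256 content hashes are all assumptions made for illustration.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DatasetRecord:
    """One auditable manifest entry for a dataset version (hypothetical schema)."""
    name: str
    version: str
    split: str                # "training", "validation", or "test"
    sha256: str               # content hash that ties the record to exact bytes
    selection_criteria: str
    labeling_process: str
    augmentations: tuple = ()


def content_hash(data: bytes) -> str:
    """Return the SHA-256 hex digest of the raw dataset bytes."""
    return hashlib.sha256(data).hexdigest()


def make_record(name, version, split, data, selection_criteria,
                labeling_process, augmentations=()):
    """Build a manifest entry whose hash is derived from the data itself."""
    return DatasetRecord(
        name=name,
        version=version,
        split=split,
        sha256=content_hash(data),
        selection_criteria=selection_criteria,
        labeling_process=labeling_process,
        augmentations=tuple(augmentations),
    )


def to_manifest_line(record: DatasetRecord) -> str:
    """Serialize a record as one JSON line with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


def matches(record: DatasetRecord, data: bytes) -> bool:
    """Check that the bytes on disk are the ones the record describes."""
    return record.sha256 == content_hash(data)
```

Because the hash is computed from the data, any silent change to a training or validation file makes `matches` return `False`, which gives auditors a simple way to confirm which dataset version a model was actually trained on.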

Functional requirements at this stage include interpretability features (e.g., heatmaps for imaging AI), fail‑safe modes when the algorithm cannot provide reliable results, auditability of AI decisions, and built-in security measures. These measures complement earlier transparency and human oversight obligations, ensuring AI systems remain explainable, resilient, and safe.
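A fail-safe mode can be as simple as letting the software abstain instead of returning an unreliable result. The sketch below assumes a classifier that outputs one probability per label; the `Decision` type, the `decide` function, and the 0.9 default threshold are hypothetical and would need to be justified in the risk management file.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Decision:
    label: Optional[str]   # None means the system abstained
    confidence: float
    reason: str            # recorded so that the decision stays auditable


def decide(probabilities: Sequence[float], labels: Sequence[str],
           min_confidence: float = 0.9) -> Decision:
    """Return the top label, or abstain when the output is invalid or uncertain."""
    if not labels or len(probabilities) != len(labels):
        return Decision(None, 0.0, "invalid model output")
    # p != p is True only for NaN, which must never reach the user as a result
    if any(p != p or p < 0.0 or p > 1.0 for p in probabilities):
        return Decision(None, 0.0, "invalid model output")
    best = max(range(len(labels)), key=lambda i: probabilities[i])
    confidence = probabilities[best]
    if confidence < min_confidence:
        return Decision(None, confidence,
                        "confidence below threshold; refer to clinician")
    return Decision(labels[best], confidence, "ok")
```

Returning a reason alongside every decision, including abstentions, supports the auditability requirement: each `Decision` can be written to a log as-is.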

Stage 2: Validation and Pre-Market Assessment

This stage is about showing, with test reports and real-world evidence, that the system performs safely and consistently. Everything regulators (and clinicians) will look at comes from here: verification testing in controlled conditions and clinical evaluation. Together, these results form the core of the submission file that demonstrates readiness for the market.

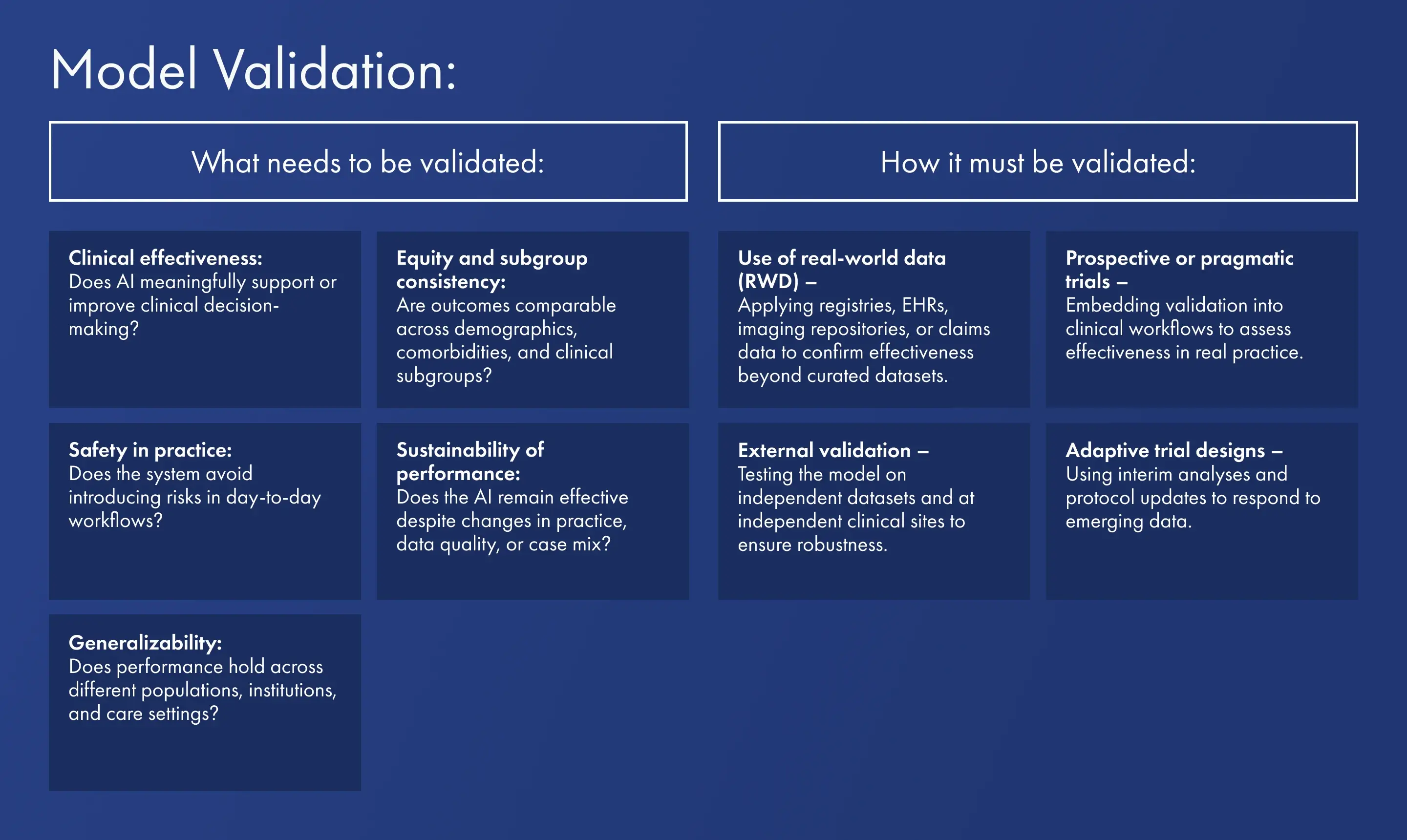

Model Validation: Evidence that the AI works in real-world settings, which may involve real-world data or adaptive trial designs. Model validation goes beyond controlled verification and focuses on demonstrating clinical effectiveness and reliability in real-world use. Regulators expect evidence that the AI performs safely and consistently when exposed to the variability of clinical practice, patient populations, and healthcare environments.
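One way to express such evidence is to report a performance metric with a confidence interval rather than a point estimate. The sketch below uses the Wilson score interval for sensitivity; the acceptance rule (lower bound at or above a pre-specified target) and the function names are assumptions for illustration, not a regulatory requirement.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96):
    """Wilson score interval for a binomial proportion (z=1.96 gives about 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    if not 0 <= successes <= n:
        raise ValueError("successes must be between 0 and n")
    p = successes / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return max(0.0, center - half), min(1.0, center + half)


def sensitivity_meets_target(true_positives: int, false_negatives: int,
                             target: float) -> bool:
    """Pass only if the lower confidence bound on sensitivity reaches the target."""
    lower, _ = wilson_interval(true_positives, true_positives + false_negatives)
    return lower >= target
```

With 90 detected cases out of 100 positives, the observed sensitivity is 0.90 but the lower bound is roughly 0.83, so a target of 0.85 would not be met on this sample size even though the point estimate exceeds it.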

Stage 3: Market Entry

At the market entry level, the conformity assessment (with a Notified Body for higher-class devices) verifies compliance with all applicable requirements. Currently, only MDR certification through a Notified Body is legally binding in the EU for medical devices (of certain risk classes). The AI Act will introduce conformity assessments in the future, but will not create a separate pathway. Under MDCG 2025-6 guidance, AI systems that are part of medical devices will continue to be certified through MDR processes; the AI Act's requirements are expected to be incorporated into the MDR conformity assessment handled by Notified Bodies, with the MDR remaining the overarching regulation for medical devices. It is important to note that while MDCG guidance is interpretative and not legally binding, it provides insight into how EU regulators currently expect the interplay between MDR and the AI Act to function.

GDPR involves no certification step at market entry, but its requirements must still be integrated into the IMS. Manufacturers are expected to follow them, as regulators already use market surveillance and enforcement tools to monitor and control compliance. At this stage, the Integrated Management System described in Section 1 becomes central: it must cover manufacturing, data lifecycle management, algorithm change control, and monitoring procedures.

Stage 4: Post-Market Surveillance and Lifecycle Management

AI-driven medical devices cannot be static. The MDR and the AI Act demand ongoing monitoring of aspects specific to AI, such as continuous bias and drift detection, transparency of evolving models, and the need for defined protocols on algorithm retraining and updates.

Bias and drift monitoring means more than routine accuracy checks. Performance drift occurs when accuracy, sensitivity, or other critical metrics decline over time due to shifts in patient populations, evolving clinical practices, or changes in data quality. If left unchecked, such drift can undermine device reliability and lead to biased outcomes, misdiagnoses, or unsafe recommendations. Effective monitoring involves periodic re-testing on fresh datasets that reflect current practice, statistical monitoring of performance metrics over time, and comparison against pre-established alert thresholds. Monitoring must be stratified by demographics, comorbidities, and geographies to uncover subgroup bias that may not appear in aggregate results. Methods such as population stability indices, continuous calibration checks, or control charts for performance metrics can be applied to detect early signs of drift.
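Two of the checks described above can be sketched in a few lines: a population stability index (PSI) comparing a reference sample with current data, and sensitivity stratified by subgroup with an alert threshold. This is a minimal illustration under stated assumptions: equal-width bins taken from the reference sample, a small `eps` floor for empty bins, and hypothetical function names. PSI values of about 0.1 and 0.25 are commonly quoted rules of thumb for moderate and large shift, not regulatory thresholds.

```python
import math
from collections import defaultdict


def psi(expected, actual, n_bins=10, eps=1e-6):
    """Population stability index between a reference and a current sample."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("reference sample has no spread")
    width = (hi - lo) / n_bins

    def proportions(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)  # out-of-range values go to edge bins
            counts[idx] += 1
        # floor empty bins at eps so the logarithm below is always defined
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


def sensitivity_by_subgroup(records):
    """records: iterable of (subgroup, y_true, y_pred), where 1 means positive."""
    tp, fn = defaultdict(int), defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            if y_pred == 1:
                tp[group] += 1
            else:
                fn[group] += 1
    return {g: tp[g] / (tp[g] + fn[g]) for g in set(tp) | set(fn)}


def subgroups_below(records, threshold):
    """List subgroups whose sensitivity falls under the alert threshold."""
    per_group = sensitivity_by_subgroup(records)
    return sorted(g for g, s in per_group.items() if s < threshold)
```

Running `subgroups_below` on each monitoring window surfaces a subgroup whose sensitivity has dropped even when the aggregate figure still looks acceptable, which is the case the stratification requirement is meant to catch.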

Change management protocols are equally critical in governing how AI systems are retrained and updated. In line with MDR and AI Act requirements, updates should be classified as routine maintenance (e.g., bug fixes or non-substantive changes), controlled retraining (requiring documented verification and validation but not full re-certification), or substantial modifications (which trigger a new conformity assessment). To ensure transparency and auditability, this governance must also define the criteria that determine the classification, such as shifts in key performance indicators, introduction of new data modalities, or architectural model changes.
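The three update classes can be encoded as an explicit, reviewable rule so that every change request is triaged the same way. The mapping below is purely illustrative: the `ProposedChange` fields, the `kpi_tolerance` value, and the decision order are assumptions, and the real classification remains a regulatory judgment agreed with the Notified Body.

```python
from dataclasses import dataclass
from enum import Enum


class ChangeClass(Enum):
    ROUTINE_MAINTENANCE = "routine maintenance"
    CONTROLLED_RETRAINING = "controlled retraining"
    SUBSTANTIAL_MODIFICATION = "substantial modification"


@dataclass(frozen=True)
class ProposedChange:
    model_weights_changed: bool   # True for any retraining
    architecture_changed: bool
    new_data_modality: bool
    kpi_shift: float              # change in the primary KPI versus the certified baseline


def classify(change: ProposedChange, kpi_tolerance: float = 0.02) -> ChangeClass:
    """Triage a proposed update using hypothetical, pre-agreed criteria."""
    if (change.architecture_changed
            or change.new_data_modality
            or abs(change.kpi_shift) > kpi_tolerance):
        return ChangeClass.SUBSTANTIAL_MODIFICATION
    if change.model_weights_changed:
        return ChangeClass.CONTROLLED_RETRAINING
    return ChangeClass.ROUTINE_MAINTENANCE
```

Keeping the rule in code (or in an equivalent controlled document) makes the criteria versionable and lets an auditor trace why a given update did or did not trigger a new conformity assessment.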

Each retraining cycle should follow documented procedures for dataset selection, preprocessing traceability, verification of new metrics, and comparison against prior baselines. Without such controls, adaptive algorithms risk drifting beyond their certified use case, compromising regulatory approval status and patient safety.
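The comparison against prior baselines can be automated as a release gate. The sketch below assumes that every metric is "higher is better" and that each has a pre-specified non-inferiority margin; the function names and the report layout are hypothetical.

```python
def compare_to_baseline(baseline: dict, candidate: dict, margins: dict) -> dict:
    """Compare a retrained model's metrics with the certified baseline.

    A metric passes when the candidate is no worse than the baseline minus
    its margin. Metrics without an entry in `margins` get a margin of 0.0.
    """
    missing = set(baseline) - set(candidate)
    if missing:
        raise ValueError(f"candidate is missing metrics: {sorted(missing)}")
    report = {}
    for metric, base_value in baseline.items():
        new_value = candidate[metric]
        margin = margins.get(metric, 0.0)
        report[metric] = {
            "baseline": base_value,
            "candidate": new_value,
            "delta": new_value - base_value,
            "passed": new_value >= base_value - margin,
        }
    return report


def release_allowed(report: dict) -> bool:
    """Allow release only if every baseline metric passed."""
    return all(entry["passed"] for entry in report.values())
```

Storing the returned report with the retraining record gives a traceable link between each released model version, the metrics it was judged on, and the baseline it replaced.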

Shaping a Trustworthy Future

The regulatory bar for AI in medical devices is high but not arbitrary. It reflects three imperatives: patient safety, data protection, and algorithmic accountability. For manufacturers, success means building a regulatory strategy aligned with the product lifecycle rather than treating MDR, GDPR, and AI Act as separate checklists.

What once felt like uncharted territory is now supported by a growing body of guidance: MDCG clarifications on software, Team-NB positions on AI transparency, and EMA principles on Good Machine Learning Practice (for AI used in the lifecycle of medicines).

AI-enabled medical devices may be complex, but they are innovative, less invasive, and designed to deliver better decision support and improved patient outcomes. By integrating regulatory, clinical, and technical requirements into one lifecycle approach, manufacturers can meet regulatory requirements and bring trustworthy, high-performing technologies to the patients who need them most.

ORCID iD: 0009-0001-6250-5659

References

- Regulation (EU) 2017/745 on Medical Devices (MDR)

- Regulation (EU) 2016/679, General Data Protection Regulation (GDPR)

- Regulation (EU) 2024/1689 laying down harmonised rules on artificial intelligence (AI Act)

- MDCG 2019-16: Guidance on Cybersecurity for medical devices

- Team-NB Position Papers on Artificial Intelligence in Medical Devices

- EMA Reflection Paper on the use of Artificial Intelligence in the lifecycle of medicines (including Good Machine Learning Practice)