Real-world data (RWD) is key to validating AI-driven software, as regulators such as the FDA and the European Commission have emphasized. DataArt recently hosted a webinar to explore how RWD helps ensure AI models are safe, effective, and ready for real-world use. The panel of experts included:

- Sara Jaworska (Juszczyk), Quality and Regulatory Affairs Manager, DataArt

- Andrey Sorokin, AI Expert and Solutions Architect, DataArt

- Varvara Bogdanova, Innovations Manager, Healthcare and Life Science, DataArt

Our experts discussed RWD management, regulatory insights, and post-market surveillance. This article summarizes the key insights from their discussion.

Why RWD Is Critical for AI in Digital Health

Varvara Bogdanova: Traditional clinical trials often have a limited scope and may not accurately represent the real-world patient population. How does real-world data address this issue?

Andrey Sorokin: Real-world data, gathered from routine clinical practice rather than controlled experiments, encompasses a broader range of patient demographics, healthcare settings, and geographic areas. This data, collected during and after medical device development, can help predict anomalies, adjust algorithms, and refine AI solutions. Ultimately, it enhances reliability and improves patient safety.

V. B.: Are there any risks associated with real-world data? How can we ensure AI remains safe?

Sara Jaworska: AI models are only as good as the data they are trained on, especially in healthcare. Bias is a significant risk: if an AI model is trained on data that lacks diversity, it can produce results that are inaccurate or even harmful for underrepresented groups. Moreover, AI models are dynamic and must evolve alongside clinical practices and patient populations. Without continuous monitoring, their performance can deteriorate over time.
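One simple way to surface the bias risk described above is to report a model's accuracy per patient subgroup instead of as a single aggregate figure. The sketch below is illustrative only: the `subgroup_accuracy` helper, the record layout, and the sample values are hypothetical and were not presented in the webinar.

```python
from collections import defaultdict


def subgroup_accuracy(records):
    """Return accuracy per subgroup.

    Each record is a (subgroup, true_label, predicted_label) tuple.
    This layout is an assumption made for the illustration.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for subgroup, truth, prediction in records:
        total[subgroup] += 1
        if truth == prediction:
            correct[subgroup] += 1
    return {group: correct[group] / total[group] for group in total}


# Made-up evaluation records: (age band, true label, predicted label).
records = [
    ("18-40", 1, 1), ("18-40", 0, 0), ("18-40", 1, 1), ("18-40", 0, 0),
    ("65+", 1, 0), ("65+", 0, 0), ("65+", 1, 0), ("65+", 1, 1),
]

per_group = subgroup_accuracy(records)
# A large gap between the best and worst subgroup is a signal to investigate.
gap = max(per_group.values()) - min(per_group.values())
print(per_group, "largest gap:", gap)
```

In practice, the subgroups and the acceptable gap would follow from the device's intended use and risk analysis rather than from a fixed number.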

Validation of AI Models with RWD

V. B.: What are the key challenges in validating AI models in medical devices using real-world data?

A. S.: First of all, it's important to understand the specifics of ground truth data. In computer vision, for example, building a training dataset for recognizing handwritten digits is straightforward: each image corresponds to a specific label. However, in clinical practice, the situation is much more complex.

When it comes to medical datasets for machine learning, we often deal with differential diagnoses, as doctors may classify the same specimen differently. This variability introduces significant technical challenges, so we need to provide data, such as DICOM images, to physicians for annotation.
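The variability between physicians can be quantified before annotations are used as ground truth. The sketch below computes Cohen's kappa, a standard agreement statistic for two annotators; it is one possible measure, not a method named by the speakers. The reader labels are invented for illustration.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labelling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both annotators must label the same non-empty set of items.")
    n = len(labels_a)
    # Share of items on which the two annotators agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical reads of the same ten images by two physicians.
reader_1 = ["benign", "malignant", "benign", "benign", "malignant",
            "benign", "malignant", "benign", "benign", "malignant"]
reader_2 = ["benign", "malignant", "malignant", "benign", "malignant",
            "benign", "benign", "benign", "benign", "malignant"]

kappa = cohens_kappa(reader_1, reader_2)
print(f"Cohen's kappa: {kappa:.2f}")
```

A kappa of 1 means perfect agreement and 0 means agreement no better than chance. Low values suggest that cases need adjudication or clearer labelling guidelines before they are used for training or validation.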

When working with geographically distributed patient cohorts, we also face complexities related to cross-border data transfer, which adds another layer of difficulty in data management, ownership, and governance.

V. B.: And what is the challenge from a regulatory standpoint? How do we ensure personal data is protected during data transfers?

S. J.: The GDPR in the EU and the FDA in the US have different definitions of anonymized data, which makes compliance tricky in these markets. The best approach, in my opinion, is to plan from the start where the AI model will be used and base data governance on the strictest requirements. This strategy helps maintain compliance across multiple regions without the need for separate policies, which can complicate operations and increase the risk of errors.

Cross-border data transfer adds another level of complexity. Regulations often restrict how personal data can be shared across borders. Conducting assessments to identify where data can be transferred and determining any additional controls needed can help address these concerns.

Finally, privacy and security must be integrated into AI development from the beginning. This includes minimizing data collection, documenting legitimate data usage, and limiting access rights. Companies that adopt a privacy-first approach now will be better prepared for future regulatory developments.

Regulatory Perspective

V. B.: What is the approach of the regulatory bodies, such as the FDA and the European Commission, towards AI validation in medical devices?

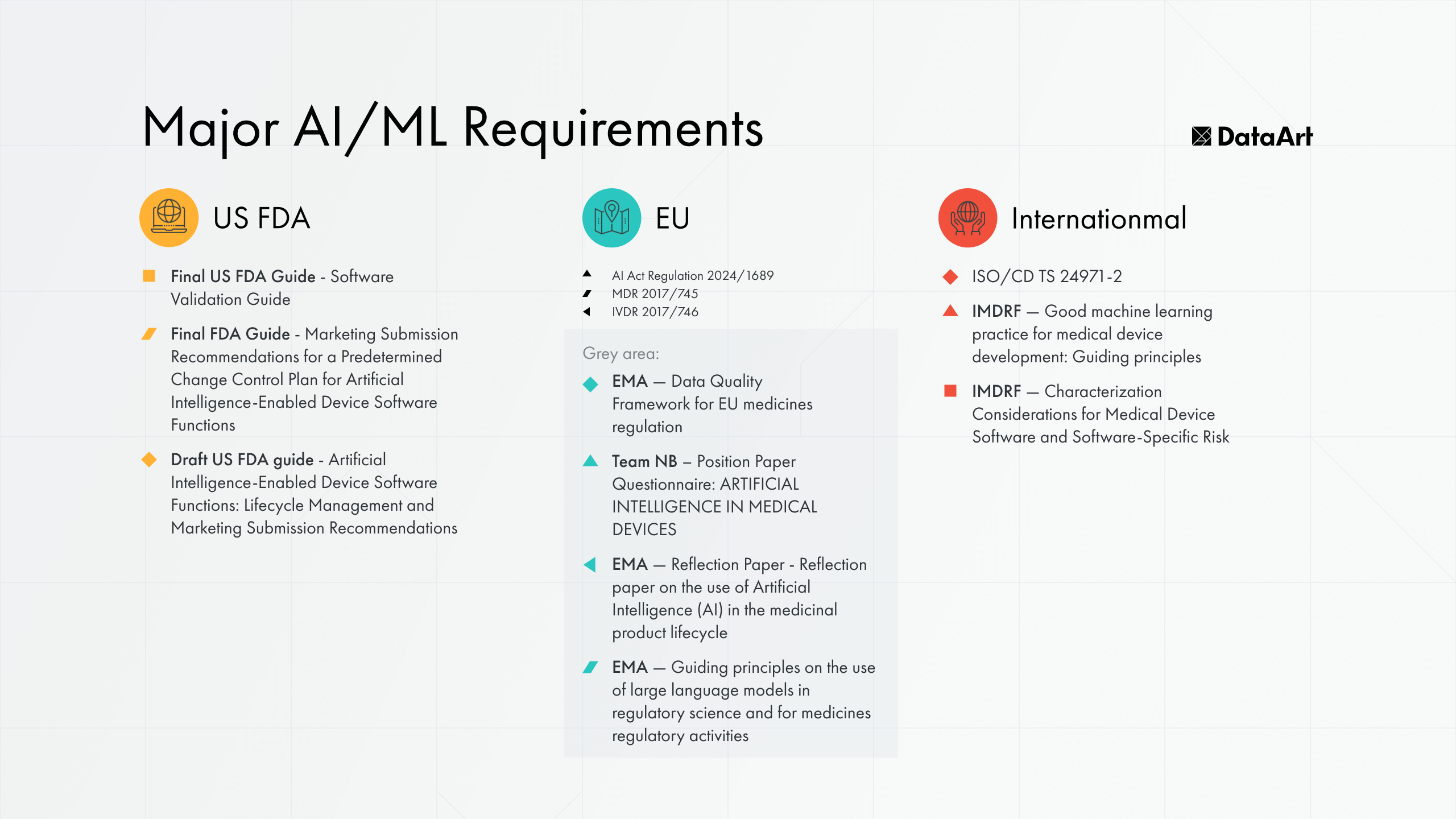

S. J.: The regulatory landscape for AI-based medical software is evolving rapidly. We’ve seen new regulations come into effect, with more still in the pipeline. Interestingly, organizations like the European Medicines Agency have added their perspective on how AI models should be developed and controlled in the context of drug discovery and development. On top of that, new AI-specific ISO standards are currently in development, and we already have the new IMDRF guideline on Good Machine Learning Practice (GMLP). This evolving framework means requirements come from many sources, so it's crucial to ensure they are not contradictory and suit our specific needs.

V. B.: Given the numerous regulations, how can organizations successfully comply?

S. J.: When it comes to medical devices, organizations must consider two main aspects: product requirements and systemic requirements. For product requirements, the starting point should be drafting the intended use of the medical device. This defines what needs to be validated, the reliability required, and the clinical context of the application. Once this is established, companies can structure their verification, validation, and risk management plans.

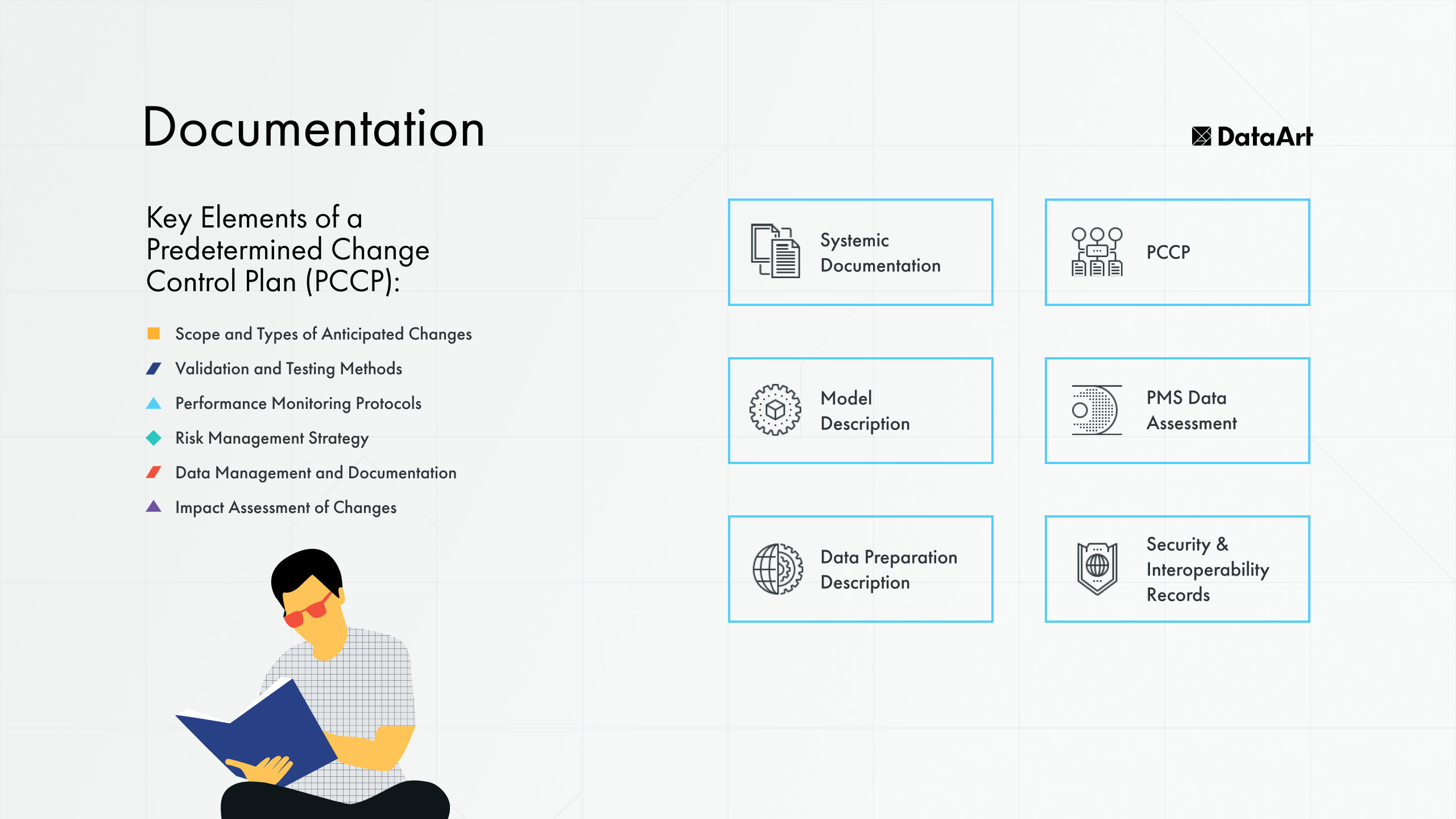

An important requirement related to product documentation is the predetermined change control plan. It documents the boundaries within which the model can operate and change, and it includes an impact assessment, an outline of risks, and a description of how changes will be validated.

For systemic requirements, compliance with regular quality standards, such as ISO 13485 and risk management protocols, is essential. Organizations should also implement robust security measures and adhere to good machine learning practices, which can be integrated into internal systems or required from vendors. One of the most significant rules here is human oversight. For example, the AI Act requires the functionality of a “stop button”, which means humans must be able to shut the system down fairly easily.

Post-Market Surveillance and Monitoring

V. B.: Once an AI-driven medical device is on the market, how can we monitor and improve it?

A. S.: It is an ongoing process, as we are typically dealing with dynamic machine learning systems. As Sara mentioned, this evolution is guided by a predetermined change control plan, which outlines how an AI model can be refined without requiring repeated submissions for certification.

However, three key scenarios necessitate resubmission. The first is the introduction of new data sources or modalities, such as switching from X-ray images to MRI scans. The second is a significant change to the algorithm, such as moving from a convolutional neural network to a vision transformer, which would also require recertification. The third is new data from clinical trials that reveals safety or performance concerns, in which case the device will need to be reworked.
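The three scenarios can be expressed as a simple screening check that a team runs before shipping a model update. This is a minimal sketch: the `ProposedChange` fields and the `resubmission_reasons` helper are hypothetical names, and a real assessment would rest on the manufacturer's change control plan and risk management, not on three flags.

```python
from dataclasses import dataclass


@dataclass
class ProposedChange:
    """Hypothetical description of a planned model update."""
    new_data_modality: bool = False              # e.g. X-ray images to MRI scans
    new_architecture: bool = False               # e.g. CNN to vision transformer
    safety_or_performance_concern: bool = False  # raised by new clinical data


def resubmission_reasons(change):
    """List which of the three scenarios the proposed change falls under."""
    reasons = []
    if change.new_data_modality:
        reasons.append("new data source or modality")
    if change.new_architecture:
        reasons.append("significant algorithm change")
    if change.safety_or_performance_concern:
        reasons.append("safety or performance concern")
    return reasons


retrain_same_setup = ProposedChange()
switch_to_mri = ProposedChange(new_data_modality=True)

# An empty list means none of the three scenarios applies.
print(resubmission_reasons(retrain_same_setup))
print(resubmission_reasons(switch_to_mri))
```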

Even the most rigorously tested models can encounter unexpected edge cases in real-world settings. Therefore, it's essential to establish processes for collecting and analyzing these anomalies early on, even before the product is launched. This includes tracking model drift and gathering feedback from real users.
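Tracking drift in the input data can start with a simple distribution comparison. The sketch below uses the Population Stability Index (PSI) to compare one feature between the validation data and production data. PSI is one common choice among many, not a technique named by the speakers. The sample values are invented, and the 0.25 alert threshold is a commonly cited rule of thumb used here as an assumption.

```python
import math


def population_stability_index(reference, current, bins=5):
    """PSI between a reference sample and a current sample of one feature."""
    low, high = min(reference), max(reference)
    if high == low:
        raise ValueError("Reference sample must contain more than one distinct value.")
    width = (high - low) / bins

    def bin_shares(values):
        counts = [0] * bins
        for value in values:
            # Values outside the reference range are clamped to the edge bins.
            index = int((value - low) / width)
            counts[min(max(index, 0), bins - 1)] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(count / len(values), 1e-6) for count in counts]

    ref_shares = bin_shares(reference)
    cur_shares = bin_shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_shares, cur_shares))


# Hypothetical patient ages: validation cohort vs. patients seen after launch.
validation_ages = [30, 35, 40, 45, 50, 55, 60, 65, 70, 75]
production_ages = [60, 62, 65, 68, 70, 72, 75, 78, 80, 85]

psi = population_stability_index(validation_ages, production_ages)
ALERT_THRESHOLD = 0.25  # assumed rule-of-thumb value, not a regulatory limit
print(f"PSI = {psi:.2f}, drift alert: {psi > ALERT_THRESHOLD}")
```

A check like this covers only shifts in the input data. Monitoring prediction quality still depends on collecting outcomes and user feedback after launch.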

V. B.: From a regulatory standpoint, how can companies ensure that AI updates don’t violate the product’s initial certification?

S. J.: Companies must utilize a predetermined change control plan that outlines acceptable and unacceptable changes. If the model undergoes modifications not covered by the plan, a new regulatory submission may be required.

From a regulatory perspective, the significance of a change can be assessed through risk management. To effectively monitor model shifts, manufacturers need a proactive post-market surveillance process.

While the EMA and FDA have different requirements for medical devices, they share common elements such as continuous monitoring, risk detection, and real-time data analysis. Manufacturers must integrate these elements into their regulatory quality strategy and processes to ensure ongoing performance assessment and risk management.

Conclusion

Real-world data enhances AI models' reliability and helps address traditional clinical trials' limitations, ensuring that diverse patient populations are accurately represented. To navigate the complexities of real-world data validation of AI software, companies should focus on risk management, data governance, and compliance with varying regulatory frameworks. Businesses that invest in strong compliance frameworks and prioritize data quality will be best positioned for success.

For more insights on real-world data validation of AI-powered medical devices, watch the full webinar here.

Contact us if you need help from experts to guide you through real-world data validation of AI-based medical software.