As enterprises move beyond isolated GenAI pilots and begin operationalizing LLMs at scale, many run into the same critical roadblocks: fragmented access, poor visibility, mounting costs, and governance risks.

At DataArt, we encountered these challenges early, both in our internal use of LLMs and when supporting enterprise clients. We realized that without a centralized control layer, deploying SaaS-based LLM APIs like OpenAI, Azure OpenAI, and Google Gemini across teams quickly became chaotic, insecure, and cost-inefficient.

This case study shares how we built an enterprise-grade LLM infrastructure that balances flexibility with control, experimentation with governance, and innovation with compliance.

Challenge: Moving Beyond Fragmented and Ungoverned LLM Adoption

While SaaS-based LLMs (e.g., OpenAI, Azure OpenAI, Claude, Gemini) make it easy to experiment, they quickly pose scaling issues for growing teams:

- No centralized access control: Provisioning or revoking API access across departments is inconsistent and risky.

- Lack of observability: Token usage per user or application cannot be easily tracked or allocated.

- Unpredictable costs: Engineering teams consume tokens rapidly, often without budget visibility or controls.

- High vendor dependency risk: Outages or policy changes from single providers can disrupt operations.

- Weak governance: Enterprise security, compliance, and regional data policies are difficult to enforce.

- Scattered experimentation: There’s no unified, governed environment to prototype and scale LLM applications.

Solution: Unified AI Platform for Enterprise-Grade LLM Management

We developed an internal solution, the DataArt AI Platform: a secure, scalable infrastructure layer that manages LLM API access across the organization.

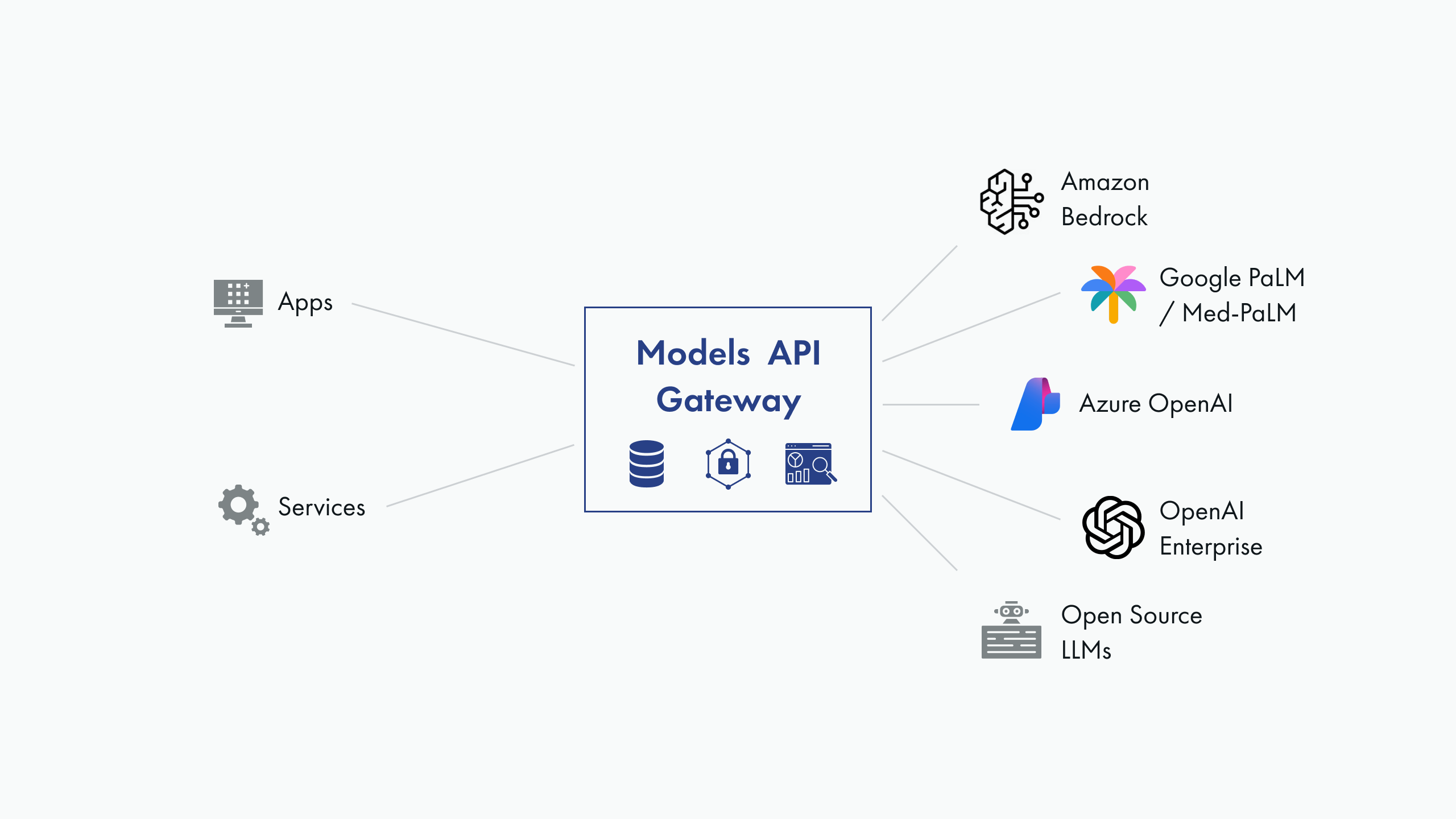

The platform's cornerstone is a “gateway” – a key component that proxies access to the actual provider APIs. It performs several vital functions:

- Conceals actual API keys from end-users.

- Issues its own API keys, offering us complete control.

- Integrates OAuth2 authentication with our Azure infrastructure.

- Accounts for requests on both a per-key and per-user basis.

- Sets access quotas for individual users or groups.

- Collects access statistics.
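To make the key-concealment idea concrete, here is a minimal sketch of how a gateway might issue its own keys and swap them for the provider key before forwarding a request. It is an illustration under stated assumptions, not the platform's actual code: `KeyRegistry`, `rewrite_headers`, the `gw-` prefix, and the `PROVIDER_KEY` placeholder are all hypothetical names, and the OpenAI-style `Authorization: Bearer` header is assumed.

```python
import secrets
from typing import Dict, Optional

# Hypothetical placeholder: a real gateway would load this from a secret store.
PROVIDER_KEY = "provider-secret-key"


class KeyRegistry:
    """Maps gateway-issued keys to users; the provider key never leaves the gateway."""

    def __init__(self) -> None:
        self._owners: Dict[str, str] = {}

    def issue(self, user: str) -> str:
        """Create a random gateway key and remember which user owns it."""
        key = "gw-" + secrets.token_urlsafe(24)
        self._owners[key] = user
        return key

    def revoke(self, key: str) -> None:
        """Invalidate a gateway key without touching the provider key."""
        self._owners.pop(key, None)

    def owner(self, key: str) -> Optional[str]:
        return self._owners.get(key)


def rewrite_headers(registry: KeyRegistry, headers: Dict[str, str]) -> Dict[str, str]:
    """Validate the caller's gateway key, then substitute the provider key."""
    auth = headers.get("Authorization", "")
    gateway_key = auth[len("Bearer "):] if auth.startswith("Bearer ") else auth
    if registry.owner(gateway_key.strip()) is None:
        raise PermissionError("unknown or revoked gateway key")
    upstream = dict(headers)  # copy so the caller's headers stay untouched
    upstream["Authorization"] = f"Bearer {PROVIDER_KEY}"
    return upstream
```

Because callers only ever hold gateway keys, revoking one user's access is a local operation and does not require rotating the provider key.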

Originally, it was implemented as an nginx reverse proxy with Lua plugins, combined with a custom Python/FastAPI service that counts requests, enforces limits, and collects usage data.
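The accounting side of such a service can be sketched in a few lines. The example below is an in-memory illustration only, assuming token-based quotas per user; `UsageLedger`, `QuotaExceeded`, and their methods are hypothetical names, and a production service would persist counters and reset them per billing period.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict


class QuotaExceeded(Exception):
    """Raised when a user has no quota left for the current period."""


@dataclass
class UsageLedger:
    # Token quota per user; users without an entry get a quota of zero.
    quotas: Dict[str, int]
    per_user: Dict[str, int] = field(default_factory=lambda: defaultdict(int))
    per_key: Dict[str, int] = field(default_factory=lambda: defaultdict(int))

    def check(self, user: str) -> None:
        """Call before forwarding a request; rejects users at or over quota."""
        if self.per_user[user] >= self.quotas.get(user, 0):
            raise QuotaExceeded(f"quota exhausted for {user}")

    def record(self, user: str, key: str, tokens: int) -> None:
        """Call after the provider responds, with the reported token count."""
        self.per_user[user] += tokens
        self.per_key[key] += tokens

    def remaining(self, user: str) -> int:
        return max(0, self.quotas.get(user, 0) - self.per_user[user])


ledger = UsageLedger(quotas={"alice": 1000})
ledger.check("alice")                 # passes: nothing used yet
ledger.record("alice", "gw-key-1", 600)
ledger.record("alice", "gw-key-2", 400)
print(ledger.remaining("alice"))      # 0
```

Tracking usage per key and per user separately is what allows one person to hold several keys (for example, one per application) while still being held to a single overall quota.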

Currently, it proxies the OpenAI and Azure OpenAI APIs, with plans to add Amazon Bedrock/Claude, Google Gemini, and prominent open-source model deployments.

Another pivotal platform component is the LLM apps marketplace (which we call the GenAI portal). It grants centralized access to deployments of LLM applications, such as:

- An in-house ChatGPT-like web application with shared company-wide and department-specific prompt libraries and an advanced prompting mode, similar to OpenAI's playground, for prompt engineering, experimentation, and application development.

- A speech-to-text tool, based on the open-source Whisper model, for audio and video transcription.

- Prototype applications and proofs of concept for various domains, which serve as starter kits and help in marketing our services to prospective clients.

Results: From The Key to Governed AI Adoption

Since deploying the AI Platform internally, we've seen measurable improvements in productivity, cost efficiency, and governance maturity across our GenAI initiatives:

- 30% reduction in LLM API spend, driven by usage quotas, rate limits, and centralized monitoring.

- Full auditability of all prompt and token usage across users, teams, and applications.

- Elimination of shadow API usage, with API keys fully obfuscated and securely managed.

- Accelerated time-to-deployment for internal GenAI apps, reducing prototyping cycles by 40%.

- Multi-provider failover readiness, enabling business continuity during provider outages or policy changes.

- Improved compliance posture, with enterprise-grade access controls and centralized logging.

Beyond operational benefits, the platform has enabled us to standardize and scale GenAI use cases, from productivity boosters to client-facing prototypes, without losing control.

This isn’t just a technical solution. It’s a foundational layer that lets us, and now our clients, treat LLMs not as ad hoc tools but as strategic infrastructure.