Each API key represents a door to new possibilities and, at the same time, a resource that requires meticulous oversight. Monitoring the usage of these keys and the LLM tokens they consume becomes a vital part of financial planning and operational efficiency in AI integration and enterprise AI solutions.

The challenge intensifies when integrating not one but multiple AI and SaaS providers into an organization’s ecosystem. To thrive in this environment, organizations need a seamless, secure, and compliant framework that supports various technologies while ensuring business continuity through redundancy plans. It also requires a platform capable of providing insightful analytics on API use, guiding decision-makers in resource allocation and strategy adjustment.

Creating an environment that encourages LLM application exploration while managing API traffic flow requires a delicate balance between governance and creativity. This balance is crucial for fostering an atmosphere where experimentation is not only possible but thrives within the bounds of organizational objectives and compliance requirements, all under a cohesive AI implementation strategy.

Recognizing these challenges early on, our DataArt AI Lab developed and deployed an AI platform that not only overcame these obstacles but also paved the way for other businesses to follow. This guide explores the common problems associated with SaaS LLM APIs and how DataArt's approach provides a blueprint for success in AI integration.

The Main Challenges of SaaS LLM APIs

When developing LLM applications for organizational use, the typical route is to leverage SaaS models provided through web APIs, such as those from OpenAI, Azure OpenAI, Claude, or Google PaLM (Gemini). However, it soon becomes clear that API access is a valuable and limited resource. Some common challenges you may encounter during AI integration include:

- Distributing a limited set of API keys among teams and individual engineers, with the option to revoke access if necessary.

- Monitoring the number of LLM tokens each team consumes, for example, to allocate budgets for API provider payments.

- Setting access quotas and priorities for each team or application.

- Gathering API usage statistics for budget planning and gaining insight into team requirements.

- Overseeing API traffic for compliance purposes.

- Providing centralized access to a diverse range of LLM providers and open-source models, facilitating innovation and experimentation.

- Ensuring redundancy to mitigate potential outages in specific regions and data centers.

- Establishing an enterprise-wide "marketplace" for LLM applications.
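
To make the redundancy item in the list above more concrete, here is a minimal Python sketch of one common way to handle an outage: try a list of endpoints in priority order and fall back to the next one when a call fails. It is an illustration only, not a description of any particular platform. The names `call_with_failover`, `primary_region`, and `secondary_region` are hypothetical, and the two "providers" are local stand-in functions rather than real API clients.

```python
from __future__ import annotations

from typing import Callable, Sequence


class AllProvidersFailed(Exception):
    """Raised when every configured endpoint has failed."""


def call_with_failover(
    endpoints: Sequence[tuple[str, Callable[[str], str]]],
    prompt: str,
) -> tuple[str, str]:
    """Try each (name, call) pair in priority order and return (name, reply)."""
    errors: list[str] = []
    for name, call in endpoints:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real gateway would catch narrower errors
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-ins for provider clients in two regions (no network access).
def primary_region(prompt: str) -> str:
    raise ConnectionError("region unavailable")


def secondary_region(prompt: str) -> str:
    return f"echo: {prompt}"


used, reply = call_with_failover(
    [("primary", primary_region), ("secondary", secondary_region)],
    "hello",
)
print(used, reply)
```

Keeping the endpoint list as plain data means the priority order can be changed per team or per application without touching the calling code.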

Decoding DataArt’s AI Platform: A Blueprint for AI Integration and Enterprise AI Solutions

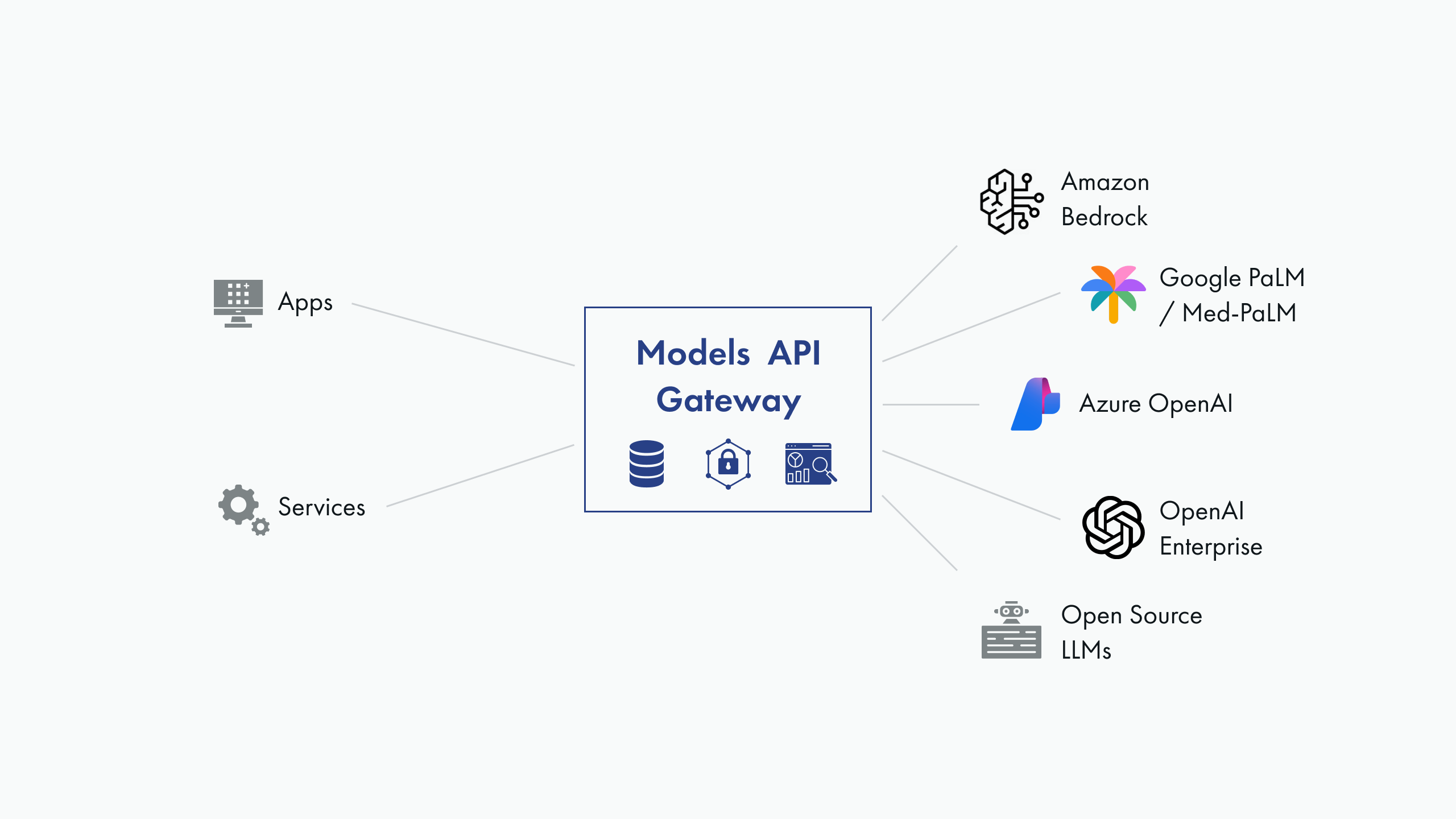

At the heart of DataArt's response to these challenges is the AI platform, a comprehensive solution designed to streamline the deployment and management of LLM applications.

The platform's cornerstone is a “gateway”, a key component that proxies access to the actual provider’s APIs. The gateway:

- Conceals actual API keys from end-users.

- Issues its own API keys, offering us complete control.

- Integrates OAuth2 authentication with our Azure infrastructure.

- Accounts for requests on both a per-key and per-user basis.

- Sets access quotas for individual users or groups.

- Collects access statistics.

Originally implemented as an nginx reverse proxy with Lua plugins combined with a custom Python/FastAPI service, the gateway counts requests, sets limits, and collects usage data. Currently, it supports proxying of the OpenAI and Azure OpenAI APIs, with plans to incorporate Amazon Bedrock/Claude, Google Gemini, and prominent open-source model deployments.
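
The gateway responsibilities listed above (hiding the provider key, issuing gateway keys, enforcing quotas, and accounting for usage) can be sketched in a few lines of Python. This is an in-memory illustration under stated assumptions, not DataArt's implementation: the `Gateway` and `KeyRecord` classes, the `gw-` key prefix, and the idea of passing a token count into `authorize` are all hypothetical choices made for the example. A production gateway would persist this state and take token counts from the provider's response.

```python
from __future__ import annotations

import secrets
from dataclasses import dataclass


@dataclass
class KeyRecord:
    user: str
    token_quota: int      # maximum tokens this gateway key may consume
    tokens_used: int = 0
    requests: int = 0
    revoked: bool = False


class Gateway:
    """In-memory sketch: issues its own keys and hides the provider key."""

    def __init__(self, provider_key: str) -> None:
        self._provider_key = provider_key  # never handed to end users
        self._keys: dict[str, KeyRecord] = {}

    def issue_key(self, user: str, token_quota: int) -> str:
        key = "gw-" + secrets.token_hex(16)
        self._keys[key] = KeyRecord(user=user, token_quota=token_quota)
        return key

    def revoke(self, key: str) -> None:
        self._keys[key].revoked = True

    def authorize(self, key: str, tokens: int) -> str:
        """Check key and quota, record usage, return the key to use upstream."""
        record = self._keys.get(key)
        if record is None or record.revoked:
            raise PermissionError("unknown or revoked key")
        if record.tokens_used + tokens > record.token_quota:
            raise PermissionError("token quota exceeded")
        record.tokens_used += tokens
        record.requests += 1
        return self._provider_key

    def usage_by_user(self) -> dict[str, int]:
        totals: dict[str, int] = {}
        for record in self._keys.values():
            totals[record.user] = totals.get(record.user, 0) + record.tokens_used
        return totals


gateway = Gateway(provider_key="placeholder-provider-key")
alice_key = gateway.issue_key("alice", token_quota=1000)
gateway.authorize(alice_key, tokens=400)
print(gateway.usage_by_user())
```

Because callers only ever hold a gateway key, revoking access or changing a quota is a change to one record and never requires rotating the provider's own key.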

Another pivotal platform component is the LLM apps marketplace (which we call the AI portal). It grants centralized access to deployments of LLM applications, such as:

- In-House ChatGPT-like Web Application: Features a shared company-wide and department-specific prompt library, and an advanced prompting mode similar to OpenAI's playground for prompt engineering, experimentation, and application development.

- Speech-to-Text Tool: Based on the open-source Whisper model, utilized for audio and video transcriptions.

- Prototype Applications and Proofs of Concept: Serve as starter kits and help in marketing our services to prospective clients, enhancing our enterprise AI solutions.

The AI platform has notably streamlined our Generative AI research and development activities, allowing us to build a portfolio of demonstration applications and internal tools that boost employee productivity in a responsible and compliant manner.

Best Practices for AI Integration and Leveraging AI Platforms

- Clearly Define Your Objectives

Before diving into AI platform integration, it's crucial to have a clear understanding of your business objectives. Define what you aim to achieve by integrating LLMs, whether it is improving customer experience, enhancing productivity, or driving innovation with enterprise AI solutions. Clear goals will help shape your enterprise AI strategy and ensure successful implementation.

- Prioritize Security and Compliance

Security and compliance should be top priorities as you integrate the AI platform into your business operations. Ensure the platform adheres to industry standards and regulations, and implement strong data protection measures, including encryption, access controls, and regular security audits. Additionally, consider the geographical location of data centers in light of data sovereignty laws.

- Foster a Culture of Experimentation in Your AI Implementation Strategy

AI platforms offer a powerful opportunity to experiment with new technologies and approaches. Encourage a culture of experimentation within your organization, allowing teams to explore different uses of LLM and SaaS APIs. This can lead to innovative solutions and applications that drive your business forward.

- Implement Effective Access Management in Your Enterprise AI Strategy

Access management is critical when working with AI platforms and APIs. Implement a robust system for managing API keys and user access, ensuring that only authorized personnel can access sensitive functionalities.

- Monitor Usage and Performance

To optimize the benefits of the AI platform, continuously monitor its usage and performance. This includes tracking API calls, response times, and resource consumption. Use these insights to adjust your integration strategy, allocate resources more effectively, and identify areas for improvement.
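
As a rough illustration of the tracking described above, the following Python sketch groups per-call records by team and reports call counts, token totals, and average response time. The `CallRecord` fields, the `summarize` function, and the sample values are assumptions made up for this example. In practice the records would come from whatever logs or metrics store your platform provides.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class CallRecord:
    team: str
    tokens: int
    latency_ms: float


def summarize(records: list[CallRecord]) -> dict[str, dict[str, float]]:
    """Group call records by team and compute simple usage statistics."""
    grouped: dict[str, list[CallRecord]] = defaultdict(list)
    for record in records:
        grouped[record.team].append(record)
    return {
        team: {
            "calls": len(calls),
            "tokens": sum(c.tokens for c in calls),
            "avg_latency_ms": mean(c.latency_ms for c in calls),
        }
        for team, calls in grouped.items()
    }


# Invented sample data, for illustration only.
sample = [
    CallRecord(team="search", tokens=100, latency_ms=100.0),
    CallRecord(team="search", tokens=200, latency_ms=300.0),
    CallRecord(team="support", tokens=50, latency_ms=80.0),
]
summary = summarize(sample)
for team, stats in summary.items():
    print(team, stats)
```

A per-team summary like this is the kind of input that budget allocation and quota adjustments can be based on.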

- Plan for Scalability

As your business grows, so will your AI needs. Choose an AI platform that can scale with your business, supporting future growth without requiring a complete overhaul of your current infrastructure.

- Continuously Evaluate and Adapt Your Strategy

AI is constantly evolving. Stay informed about the latest developments and be ready to adapt your AI implementation strategy as needed. This may involve experimenting with new LLMs, adjusting your security practices, or exploring new AI use cases within your business.

- Measure the Impact and ROI of Your AI Integration

Finally, to justify the investment, it's essential to measure the impact of your AI integration efforts. Establish metrics that reflect your business goals, such as improvements in efficiency, customer satisfaction, or innovation. Track these metrics over time to assess the return on investment (ROI) and make data-driven decisions about future technology investments.

Conclusion

Incorporating AI into your enterprise is no longer an option — it's a strategic imperative. By implementing a well-defined AI integration strategy, prioritizing security, and fostering a culture of innovation, businesses can unlock the full potential of AI. The right enterprise AI solutions will not only streamline processes but also position your business at the cutting edge of technological advancement, delivering measurable success in the long run.