Introduction

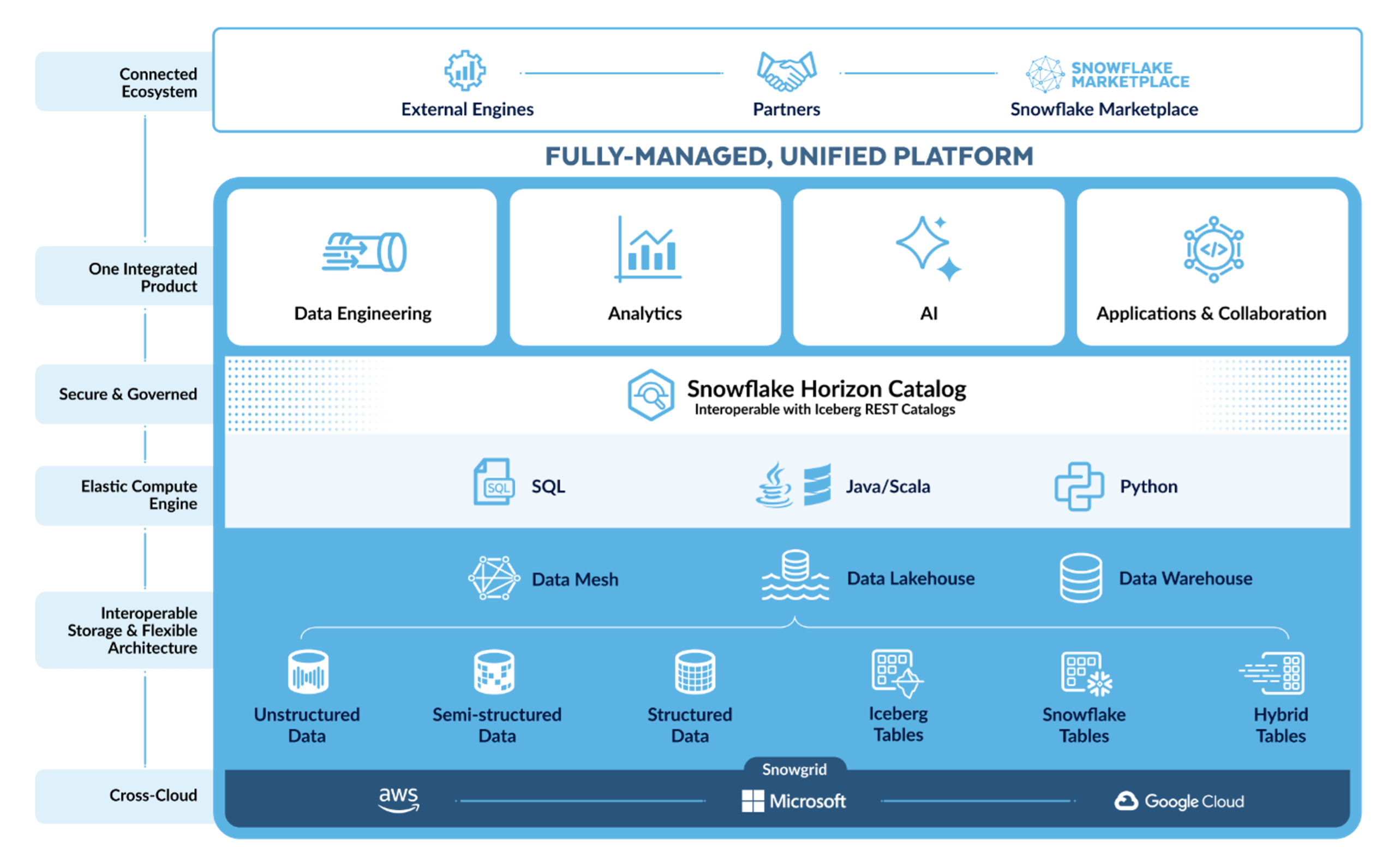

Snowflake recently rolled out a wave of new features, with more updates on the way. This article highlights the most impactful updates from the author's perspective and describes their practical benefits. New data ingestion, transformation, and consumption capabilities streamline operations for data owners and consumers. Additional enhancements support long text and media analysis, simplify development and infrastructure management, strengthen security and data governance, and improve compute cost efficiency.

New Snowflake Features for Data Engineering and BI

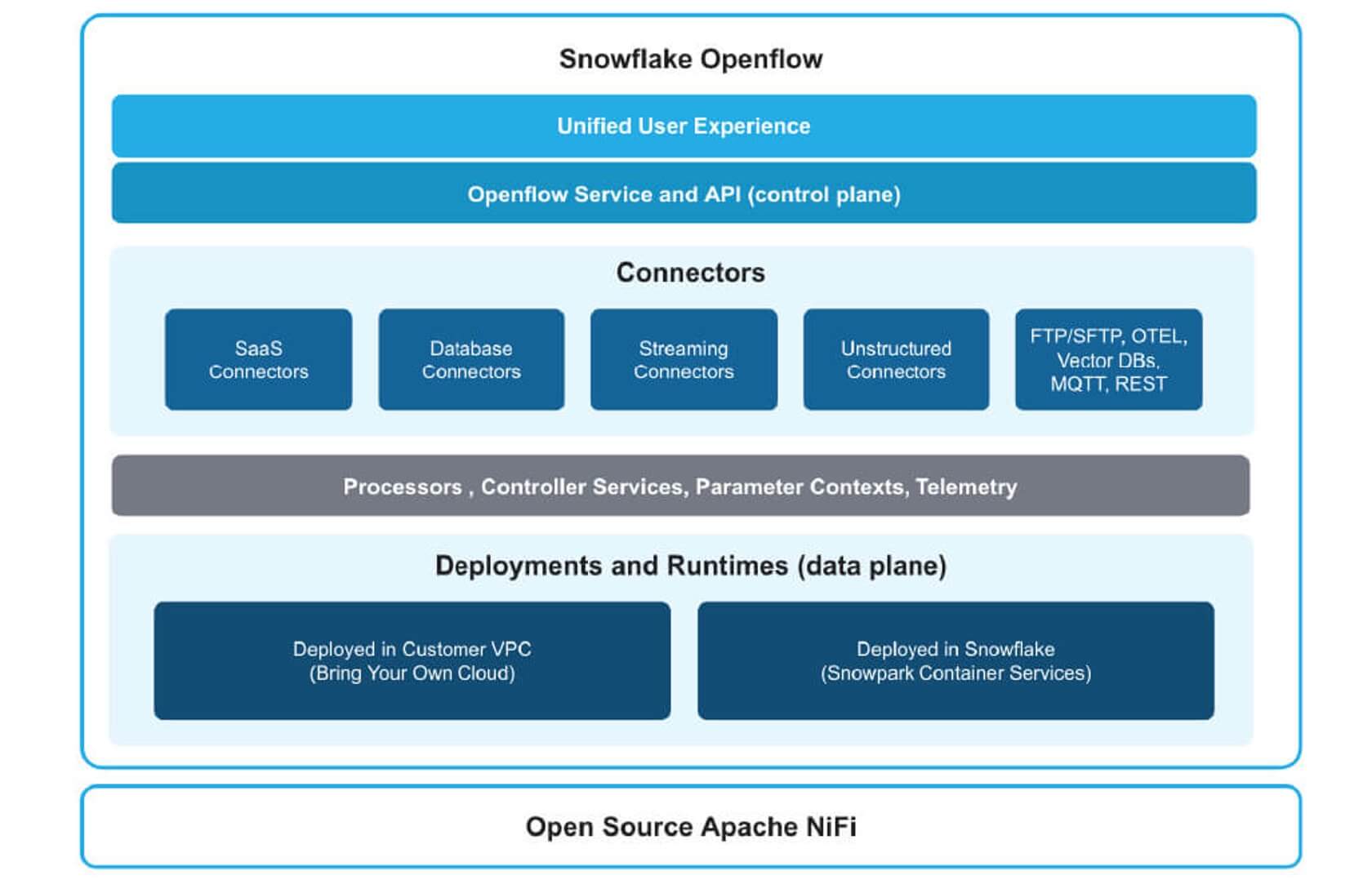

OpenFlow: A New Approach to Data Integration

For data ingestion and reverse ETL, OpenFlow brings Apache NiFi’s integration capabilities directly into Snowflake. It supports structured, semi-structured, and unstructured data through streaming, batch, and micro-batch approaches, with minimal development effort and little specialized expertise required. Given NiFi’s open-source nature and its large library of existing components, it should be a strong competitor to Fivetran and Matillion from day one.
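
The three ingestion modes differ mainly in how many records travel together. The sketch below is a generic illustration in plain Python, not OpenFlow or NiFi code. The hypothetical helper `micro_batches` groups an incoming record stream into size-capped batches. Real pipelines usually also flush on a time interval, and this sketch omits that.

```python
from itertools import islice
from typing import Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def micro_batches(records: Iterable[T], max_size: int) -> Iterator[List[T]]:
    """Group a record stream into lists of at most max_size records.

    max_size=1 approximates record-at-a-time streaming; a max_size larger
    than the whole input behaves like a single batch load.
    """
    if max_size < 1:
        raise ValueError("max_size must be at least 1")
    it = iter(records)
    while True:
        batch = list(islice(it, max_size))
        if not batch:  # source exhausted
            return
        yield batch


events = [{"id": i} for i in range(7)]
for batch in micro_batches(events, max_size=3):
    print([e["id"] for e in batch])  # [0, 1, 2] then [3, 4, 5] then [6]
```

Because the batch size is a single parameter, the same consumer code can move between streaming-like and batch-like behavior. Only the latency/throughput trade-off changes.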

Additionally, a flexible usage-based pricing model, a low hourly cost, and the option to bring your own cloud infrastructure make it a real game-changer. Together with Apache Iceberg and bring-your-own compute, this turns Snowflake into either a highly flexible and cost-efficient service for all company data or an open component that integrates into the wider company data landscape.

There is significant potential in using OpenFlow's AI capabilities to build flexible data pipelines that adapt as data evolves, including creating pipelines from natural-language descriptions. OpenFlow also lets companies hand ownership of data pipelines to product teams without a steep learning curve, and it can serve as an adaptive data ingestion solution. Thanks to its simplicity and all-in-one ingestion architecture, it reduces development time and cost both in the initial growth phase and during ongoing maintenance.

Deployment options include a fully managed deployment on Snowflake infrastructure (not yet available). Bring Your Own Cloud (BYOC) is an option where Snowflake manages the setup but the customer owns the infrastructure. Bring Your Own Virtual Private Cloud (BYO-VPC) should suit companies with centralized architecture management and specific security requirements. The customer-hosted options can also shift part of the cost from the Snowflake bill to the cloud budget. One possible disadvantage is that data engineers who need to add a new connector must learn a new service written in Java, although most functionality can also be implemented in Python.

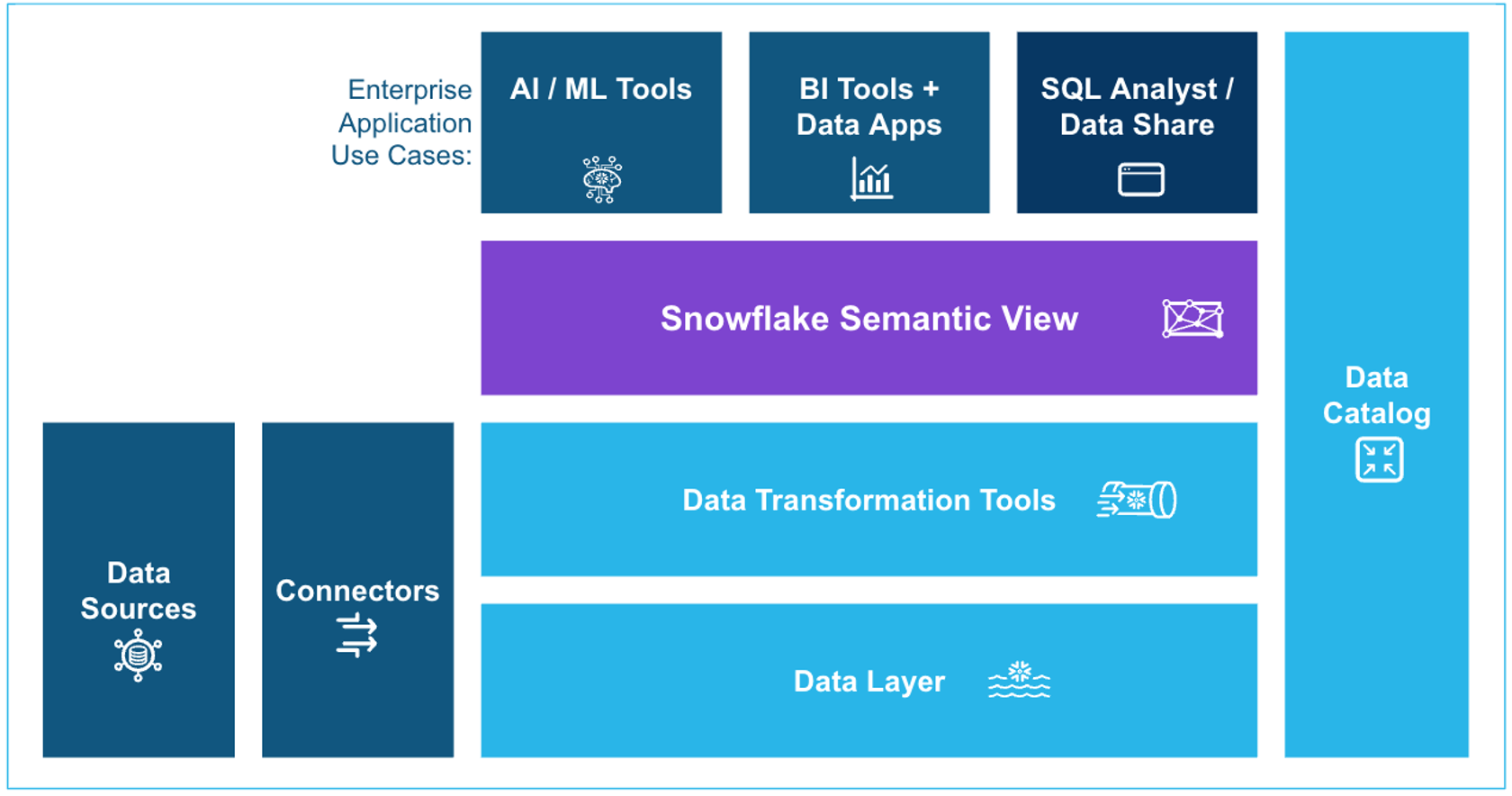

Semantic Views: Building Trust in AI Analytics

Snowflake Semantic Views are a new form of semantic layer and are far more convenient than the earlier YAML semantic models. Although the two are compatible, I prefer semantic views because they are more structured. A semantic model is needed because AI and BI tools require an explanation of what your data means. If you skip the semantic layer in your data solution, you significantly increase the risk of unreliable responses from AI analytics, and you still have to describe your data separately for business intelligence (BI) tools.

I believe that semantic layers will become an essential component of every data platform. Fortunately, built-in LLMs can generate exceptionally high-quality semantic model definitions that need only minimal adjustments. Together with verified queries, semantic views improve the quality of AI responses, including “talk to your data” capabilities. This makes semantic models, combined with verified queries, a key enabler of data democratization in both AI analytics and traditional BI, and essential for building trustworthy AI analytics.
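
To make the idea concrete, here is a toy semantic layer in plain Python. It is not Snowflake's semantic view syntax. The class `SemanticModel`, the table `sales.orders`, and the column names are hypothetical. The sketch shows the core benefit described above. An AI or BI tool only picks named metrics and dimensions, and the SQL behind them comes from definitions the data team has vetted. An undefined term is rejected rather than guessed.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class SemanticModel:
    """Toy semantic layer: business names mapped to vetted SQL expressions."""

    table: str
    dimensions: Dict[str, str] = field(default_factory=dict)
    metrics: Dict[str, str] = field(default_factory=dict)

    def compile(self, metrics: List[str], dimensions: List[str]) -> str:
        """Build SQL for the requested metrics grouped by the requested dimensions."""
        if not metrics and not dimensions:
            raise ValueError("request at least one metric or dimension")
        # Reject undefined names instead of guessing what the user meant.
        unknown = [m for m in metrics if m not in self.metrics]
        unknown += [d for d in dimensions if d not in self.dimensions]
        if unknown:
            raise KeyError(f"undefined semantic names: {unknown}")
        select = [f"{self.dimensions[d]} AS {d}" for d in dimensions]
        select += [f"{self.metrics[m]} AS {m}" for m in metrics]
        sql = f"SELECT {', '.join(select)} FROM {self.table}"
        if dimensions:
            sql += " GROUP BY " + ", ".join(self.dimensions[d] for d in dimensions)
        return sql


model = SemanticModel(
    table="sales.orders",
    dimensions={"region": "customer_region"},
    metrics={"total_revenue": "SUM(amount)", "order_count": "COUNT(DISTINCT order_id)"},
)
print(model.compile(["total_revenue"], ["region"]))
# SELECT customer_region AS region, SUM(amount) AS total_revenue FROM sales.orders GROUP BY customer_region
```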

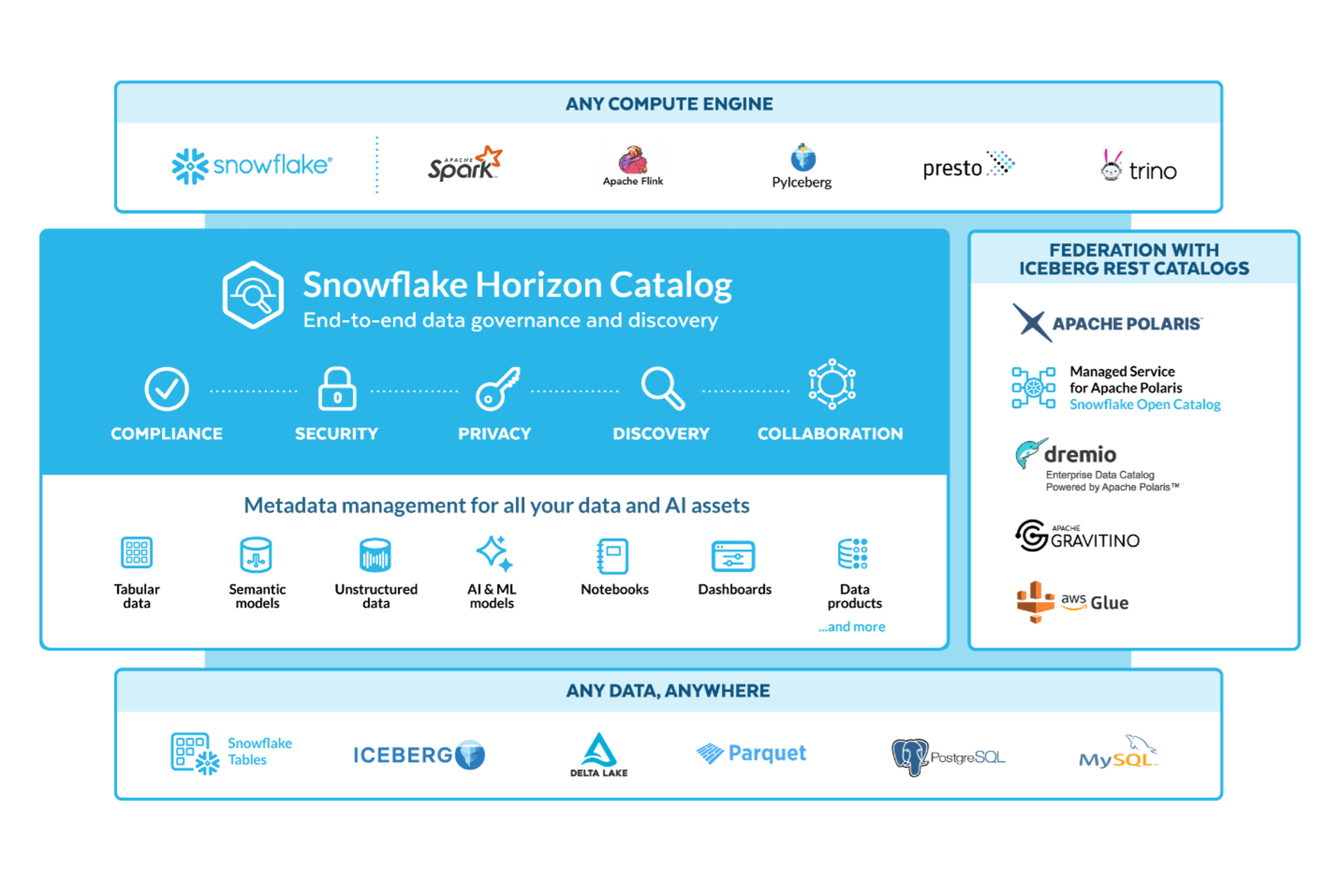

Snowflake Horizon: Security Meets Discovery

Improvements to Snowflake Horizon enable synchronization with other catalogs, discovery of external databases without leaving Snowflake, and answers to governance and security questions, furthering a democratic data environment and enhancing interoperability. AI-powered data classification and vulnerability detection strengthen the security of your data. As modern data services increasingly decouple storage from the platform, managing the metadata layer is becoming essential for seeing and using data stored outside the platform, in external or shared storage such as Iceberg tables. I expect Snowflake to invest further in governance and observability across different storage types and services.
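
Horizon's classification is AI-powered. The sketch below substitutes simple regular-expression rules to show what a classification step produces: a semantic category for each column, inferred from sample values. The category names, patterns, and the 80% threshold are illustrative assumptions, not Snowflake's classification categories or logic.

```python
import re
from typing import Dict, List, Optional

# Hypothetical rules: category -> pattern that a whole value must match.
RULES: Dict[str, re.Pattern] = {
    "EMAIL": re.compile(r"[^@\s]+@[^@\s]+\.[A-Za-z]{2,}"),
    "IPV4": re.compile(r"(?:\d{1,3}\.){3}\d{1,3}"),
    "US_ZIP": re.compile(r"\d{5}(?:-\d{4})?"),
}


def classify_column(samples: List[str], threshold: float = 0.8) -> Optional[str]:
    """Return the first category matched by at least `threshold` of the non-empty samples."""
    values = [s.strip() for s in samples if s and s.strip()]
    if not values:
        return None
    for category, pattern in RULES.items():
        hits = sum(1 for v in values if pattern.fullmatch(v))
        # A ratio instead of "all values" tolerates a few dirty entries.
        if hits / len(values) >= threshold:
            return category
    return None


def classify_table(columns: Dict[str, List[str]]) -> Dict[str, Optional[str]]:
    """Classify every column of a table from its sample values."""
    return {name: classify_column(values) for name, values in columns.items()}


sample = {
    "contact": ["ana@example.com", "bo@example.org", "cy@example.net"],
    "ip": ["10.0.0.1", "192.168.1.20"],
    "zip": ["02139", "10001-1234"],
    "comment": ["great service", "late delivery"],
}
print(classify_table(sample))
# {'contact': 'EMAIL', 'ip': 'IPV4', 'zip': 'US_ZIP', 'comment': None}
```

The output of such a step is what governance features build on: once a column is tagged, masking or access policies can target the tag rather than individual column names.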

PostgreSQL Integration: Unified Analytics and Transactions

The built-in PostgreSQL database, along with hybrid tables, enables real-time analytics on transactional databases, signaling a return to integrated architectures where application and analytics data workloads coexist on a single platform. While it may not become the default approach for all use cases in the near term, it offers a viable alternative to complex data infrastructures and ingestion pipelines, especially for smaller companies and new projects. Looking ahead, this setup also supports advanced use cases, such as AI-driven transactions, rapid outlier detection, and in-system issue resolution through AI agents.
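
The architectural point, analytics running directly on transactional data, can be illustrated with SQLite from Python's standard library. Here SQLite stands in for the built-in PostgreSQL database and hybrid tables. This is an analogy, not Snowflake code, and the `orders` schema and helper names are hypothetical. Transactional writes and an analytical aggregate hit the same store. Because no ingestion pipeline sits between them, the aggregate reflects a committed order immediately.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, region TEXT NOT NULL, amount REAL NOT NULL)"
)


def place_order(region: str, amount: float) -> int:
    """Transactional write: committed atomically, or rolled back on error."""
    with conn:  # the connection context manager commits or rolls back
        cur = conn.execute(
            "INSERT INTO orders (region, amount) VALUES (?, ?)", (region, amount)
        )
    return cur.lastrowid


def revenue_by_region() -> dict:
    """Analytical read over the same table, with no copy or ingestion step."""
    rows = conn.execute(
        "SELECT region, SUM(amount) FROM orders GROUP BY region ORDER BY region"
    )
    return dict(rows.fetchall())


place_order("EMEA", 120.0)
place_order("AMER", 80.0)
place_order("EMEA", 30.0)
print(revenue_by_region())  # {'AMER': 80.0, 'EMEA': 150.0}
```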

Developer Experience Improvements

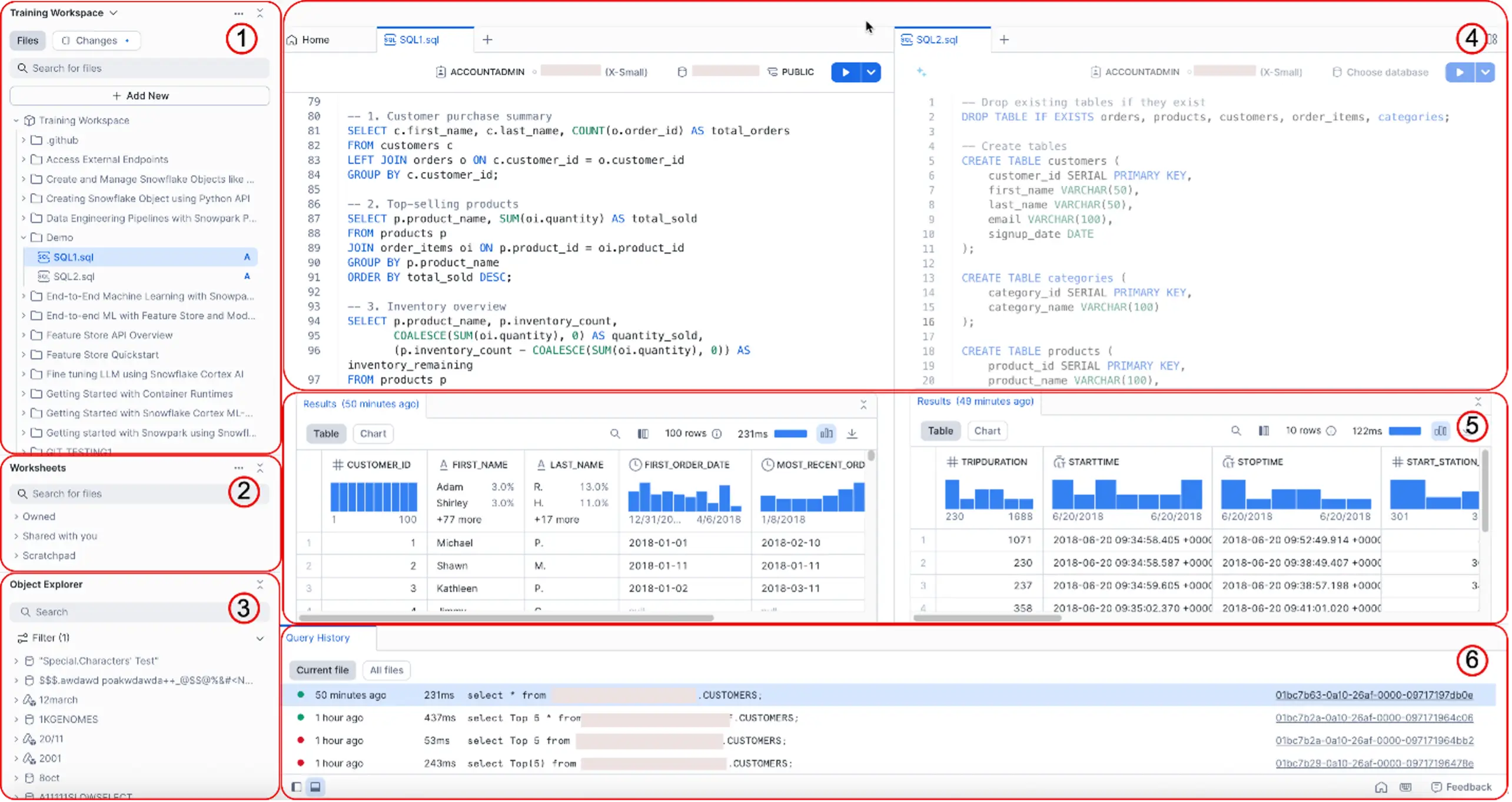

Snowsight Workspaces

The new development environment consolidates tools within Snowflake's UI, potentially reducing the need for external IDEs and multiple extensions. Integration with Snowflake Copilot aims to accelerate development.

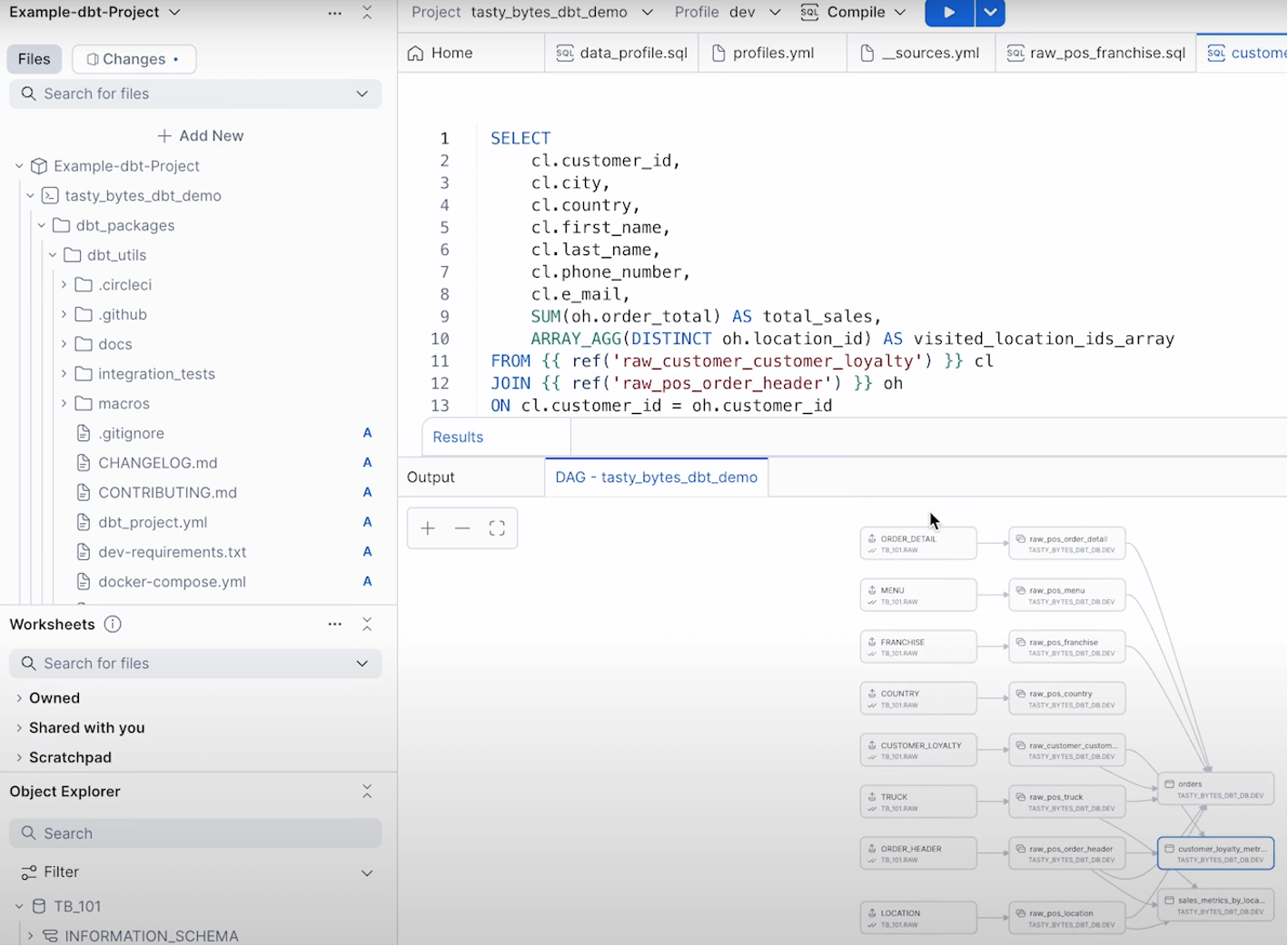

Native DBT Support

Native DBT functionality in the Snowflake UI eliminates the need for a complex setup, and it could pave the way for similar integrations with tools like SQLMesh and Coalesce.

Container Services

Snowflake now supports deploying containerized services and jobs from a built-in container registry. This enables custom code execution, extensions to Snowflake functionality, and job processing on Snowflake compute pools. It extends the existing Streamlit and Native Apps capabilities, simplifies infrastructure setup, and could eliminate the need for many external components around Snowflake.

Compute Cost and Performance

Adaptive warehouses help optimize credit usage at a more granular level, while Snowflake Generation 2 standard warehouses, currently available in selected regions, offer further control over costs for analytics workflows. Additionally, improved performance when accessing Iceberg tables supports broader adoption of open table formats and smoother migration to open standards.
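
A rough credit estimate helps explain why more granular compute control matters. The sketch uses Snowflake's long-standing rules for standard warehouses. An X-Small consumes 1 credit per hour, and each size up doubles that. Billing is per second, with a 60-second minimum each time a warehouse starts or resumes. Adaptive and Generation 2 warehouses may be billed differently, so treat this only as a baseline. The function and parameter names are hypothetical.

```python
# Credits per hour for standard (Generation 1) warehouses; each size doubles.
CREDITS_PER_HOUR = {"XS": 1, "S": 2, "M": 4, "L": 8, "XL": 16}
MIN_BILLED_SECONDS = 60  # minimum billed each time a warehouse starts or resumes


def credits_for_run(size: str, active_seconds: float, idle_until_suspend: float = 0.0) -> float:
    """Estimate compute credits for one resume-to-suspend cycle.

    Idle time before auto-suspend is billed like active time. Cloud-services
    credits and Generation 2 pricing are deliberately left out.
    """
    billed_seconds = max(active_seconds + idle_until_suspend, MIN_BILLED_SECONDS)
    return CREDITS_PER_HOUR[size] * billed_seconds / 3600


# A 30-second query on a Medium warehouse with 60 seconds of idle time before
# auto-suspend is billed for 90 seconds: 4 credits/hour * 90 / 3600 = 0.1 credits.
print(credits_for_run("M", 30, idle_until_suspend=60))
# A 10-second query on X-Small still pays the 60-second minimum: 1/60 of a credit.
print(round(credits_for_run("XS", 10), 4))
```

Under these rules, many short bursts and long idle tails inflate cost well beyond actual query time. That is the kind of waste finer-grained warehouse management aims to reduce.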

AI for Data: Expanding Snowflake’s Reach

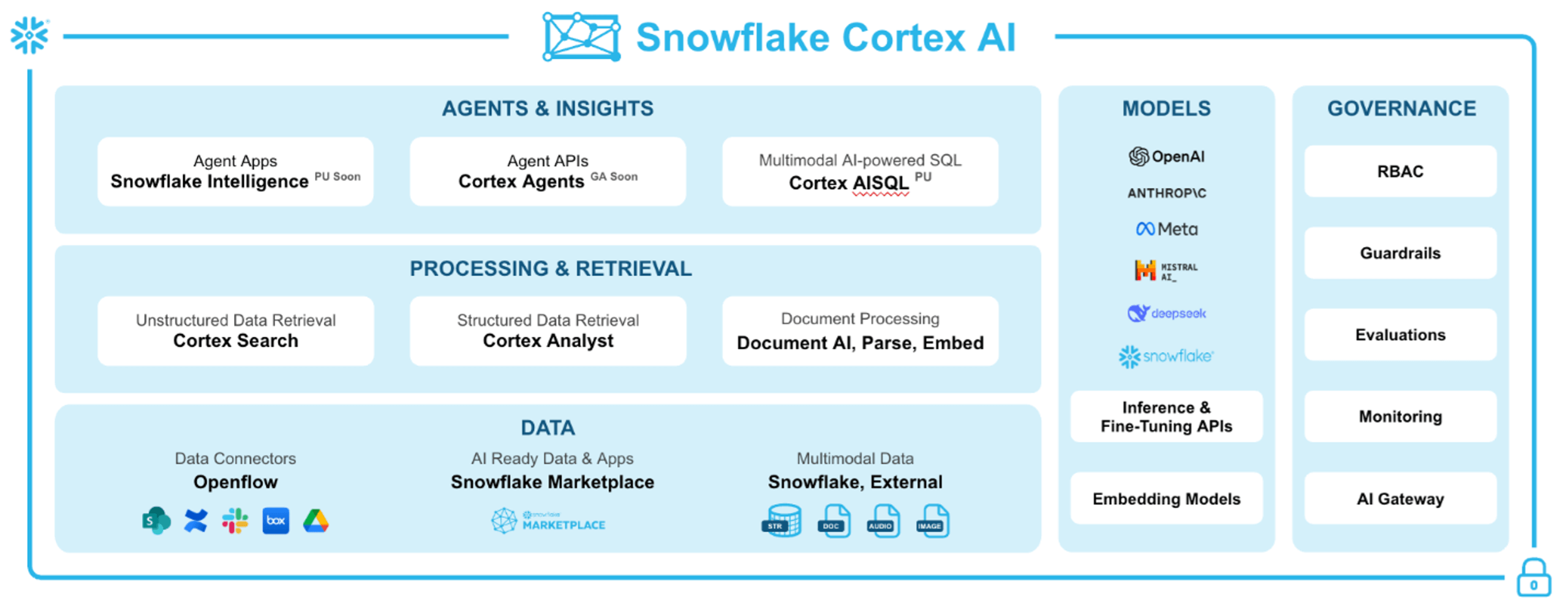

Snowflake continues to enhance its AI capabilities for both data professionals and less technical users. Key areas of improvement include data democratization, which makes data accessible to people unfamiliar with analytics tools, and new AI-driven features that boost productivity for data professionals. Most of the features listed below serve both purposes, although Cortex Analyst is aimed primarily at new users.

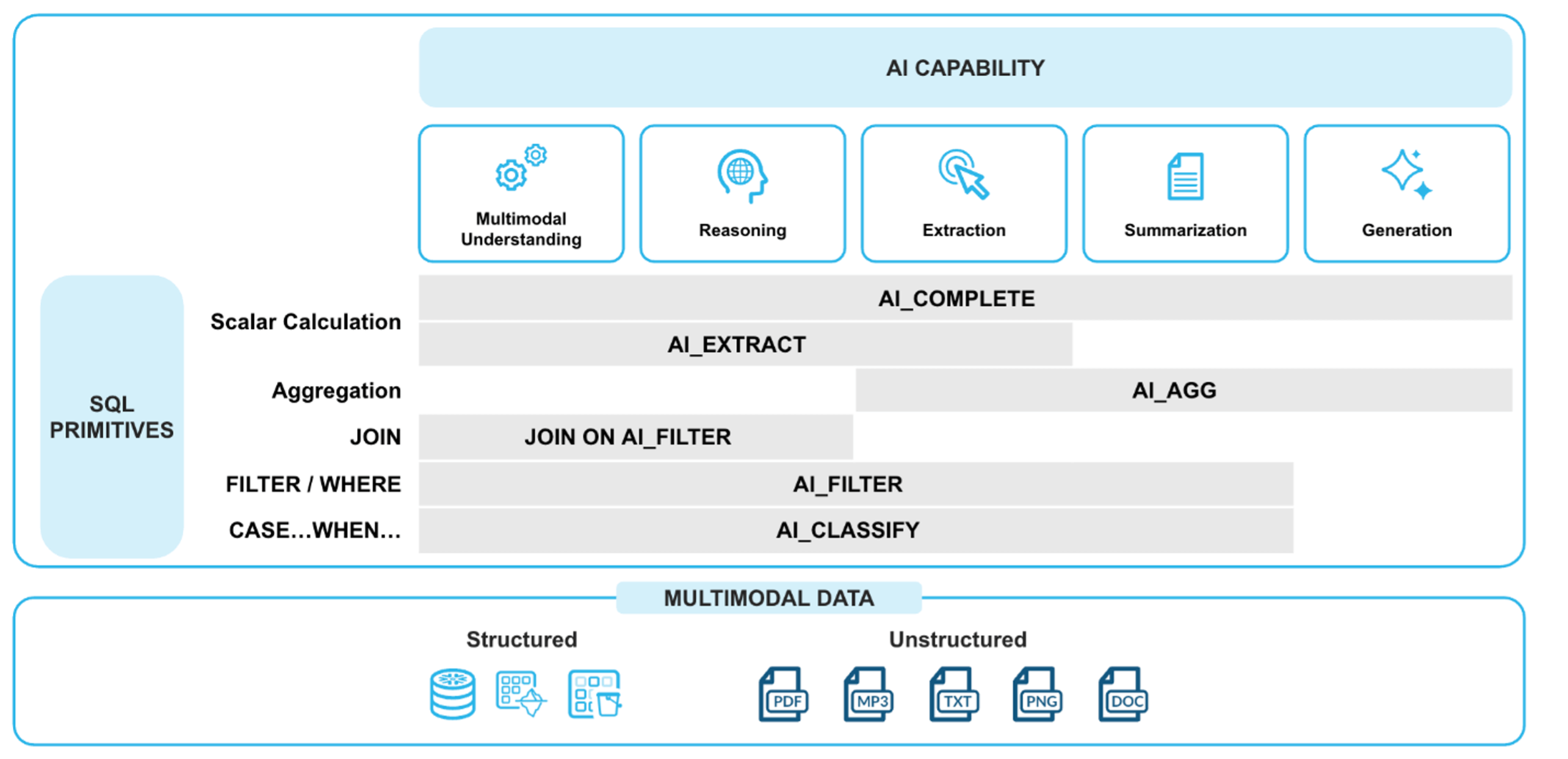

Cortex AI SQL extends traditional SQL to query unstructured data such as text, images, and other media, without requiring Python or deep AI knowledge. Data professionals can now classify, filter, aggregate, compare, and join across structured and unstructured sources using standard SQL. This significantly reduces the effort of unstructured data analytics and simplifies tasks that previously required custom development. It could also mark a step toward establishing AI-enhanced SQL as a new industry standard for the SQL language.
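
Cortex AI SQL exposes model calls as SQL functions. The sketch below mimics that pattern locally by registering a Python function as a SQL function in SQLite and using it in a `WHERE` clause next to an ordinary `GROUP BY`. The function `ai_filter_stub` is hypothetical and uses naive keyword matching in place of a language model. It does not reproduce Snowflake's AI SQL function names or signatures.

```python
import sqlite3

# Hypothetical stand-in for an LLM-backed predicate: returns 1 when the text
# looks like a complaint. A real AI SQL function would call a model instead.
NEGATIVE_HINTS = ("late", "broken", "refund", "never arrived")


def ai_filter_stub(question: str, text: str) -> int:
    # `question` is ignored by this toy; it marks where a prompt would go.
    lowered = text.lower()
    return int(any(hint in lowered for hint in NEGATIVE_HINTS))


conn = sqlite3.connect(":memory:")
conn.create_function("ai_filter_stub", 2, ai_filter_stub)
conn.execute("CREATE TABLE reviews (id INTEGER PRIMARY KEY, product TEXT, body TEXT)")
conn.executemany(
    "INSERT INTO reviews (product, body) VALUES (?, ?)",
    [
        ("kettle", "Arrived late and the lid was broken."),
        ("kettle", "Boils fast, very happy."),
        ("toaster", "Still waiting, it never arrived."),
        ("toaster", "Great value."),
    ],
)

# Structured aggregation over rows selected by a predicate on unstructured text.
query = """
    SELECT product, COUNT(*) AS complaints
    FROM reviews
    WHERE ai_filter_stub('Is this review a complaint?', body) = 1
    GROUP BY product
    ORDER BY product
"""
print(conn.execute(query).fetchall())  # [('kettle', 1), ('toaster', 1)]
```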

Cortex Knowledge Extensions enable proprietary AI providers to support retrieval-augmented generation (RAG) without exposing all underlying content. These extensions enable AI models to run securely within a customer’s environment, providing safe and context-rich insights from private data without compromising control or visibility.
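
Retrieval-augmented generation boils down to fetching only the passages relevant to a question and passing them to the model as context. The sketch shows that flow with naive word-overlap (Jaccard) scoring in place of the vector or hybrid search a real service would use. The functions `retrieve` and `build_prompt` and the sample passages are hypothetical. Only the top-ranked passages leave the corpus, which is what lets a provider ground answers in its content without handing over all of it.

```python
import re
from typing import List, Set, Tuple


def tokenize(text: str) -> Set[str]:
    """Lower-case word tokens; a stand-in for real embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, passages: List[str], k: int = 2) -> List[Tuple[float, str]]:
    """Return up to k passages with non-zero Jaccard overlap, best first."""
    q = tokenize(question)
    scored = []
    for passage in passages:
        t = tokenize(passage)
        union = q | t
        score = len(q & t) / len(union) if union else 0.0
        scored.append((score, passage))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [pair for pair in scored[:k] if pair[0] > 0]


def build_prompt(question: str, hits: List[Tuple[float, str]]) -> str:
    """Only the retrieved passages are sent to the model, not the whole corpus."""
    context = "\n".join(f"- {passage}" for _, passage in hits)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


corpus = [
    "Refunds are issued within 14 days of a return.",
    "Our headquarters moved to Berlin in 2021.",
    "Returns require the original receipt.",
]
question = "How many days until refunds are issued?"
print(build_prompt(question, retrieve(question, corpus)))
```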

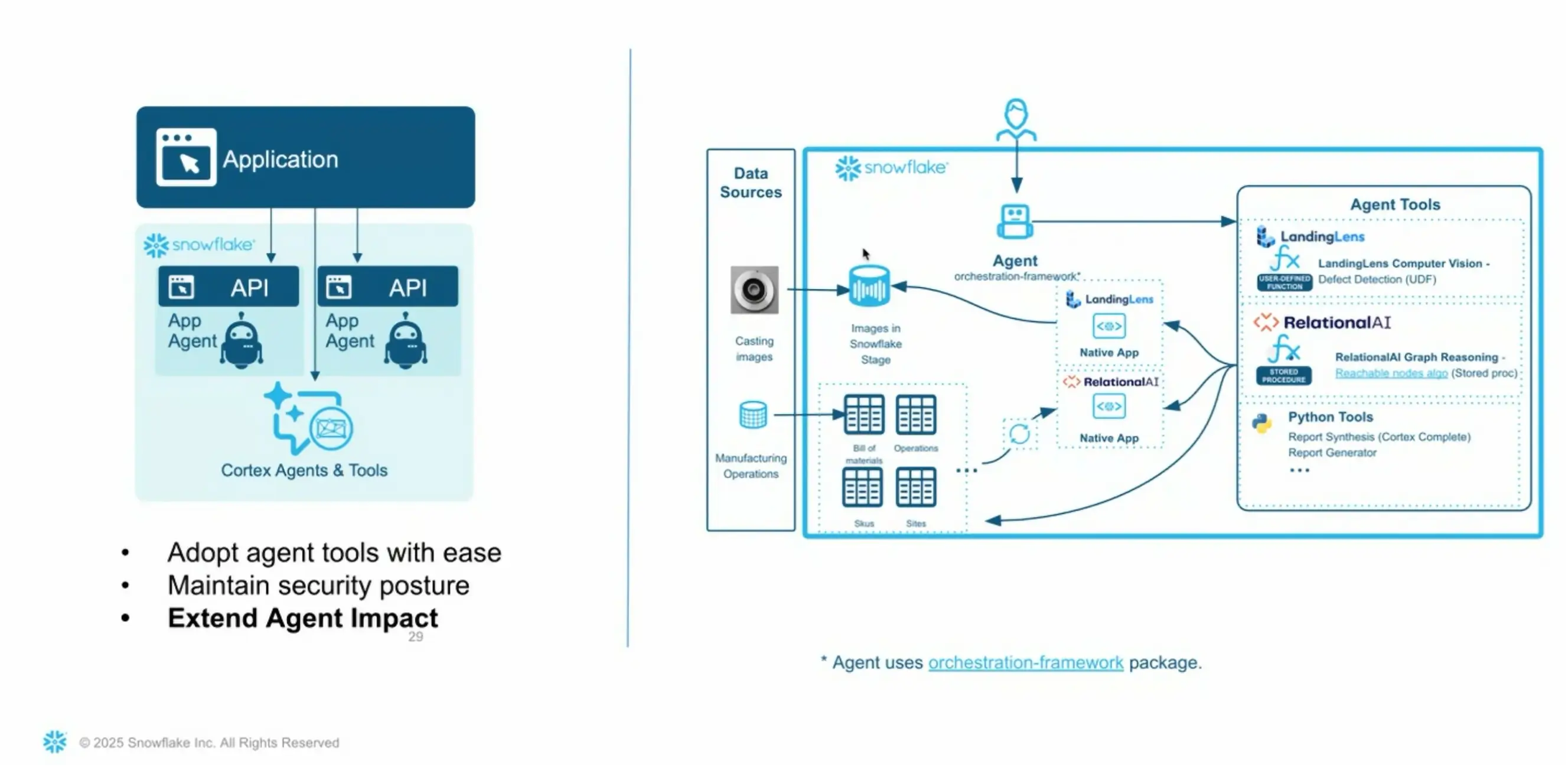

To coordinate AI interactions, Snowflake introduced a built-in AI agent. It routes user prompts to the appropriate AI model, retains context, and manages multistep interactions across services. By acting as a bridge between users, models, and knowledge bases, it provides a foundational layer for more advanced AI interfaces, such as enterprise chatbots or agentic systems.
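
The sketch below shows the routing idea in a minimal form. A hypothetical `Agent` class sends each prompt to one of two stand-in tools and keeps a conversation history, so later turns can use earlier context. The keyword-based routing rule and the tool names are assumptions. A real agent would typically let an LLM choose the tool and plan multistep calls.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

History = List[Tuple[str, str]]
Tool = Callable[[str, History], str]


def analyst_tool(prompt: str, history: History) -> str:
    # Stand-in for a structured-data tool such as text-to-SQL over a semantic model.
    return f"[analyst] metric answer for: {prompt}"


def search_tool(prompt: str, history: History) -> str:
    # Stand-in for document search; it can read earlier turns from history.
    return f"[search] documents for: {prompt} (turn {len(history) + 1})"


@dataclass
class Agent:
    tools: Dict[str, Tool]
    history: History = field(default_factory=list)

    def route(self, prompt: str) -> str:
        # Hypothetical rule; a real agent would usually let an LLM choose the tool.
        quantitative = ("how many", "total", "average", "revenue", "count")
        text = prompt.lower()
        return "analyst" if any(word in text for word in quantitative) else "search"

    def ask(self, prompt: str) -> str:
        answer = self.tools[self.route(prompt)](prompt, self.history)
        self.history.append((prompt, answer))  # keep context for later turns
        return answer


agent = Agent(tools={"analyst": analyst_tool, "search": search_tool})
print(agent.ask("What was total revenue last quarter?"))
print(agent.ask("Summarize the refund policy"))
```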

Building on that foundation, Agentic Applications will soon be available in preview. They will allow companies to deploy custom AI models trained to generate responses for different use cases. Snowflake also announced upcoming Model Context Protocol (MCP) servers and inter-agent communication. These will enable multistep tasks coordinated across multiple AI agents, further expanding the scope of intelligent automation and chatbot capabilities.

Several long-standing features remain relevant alongside these innovations. Document AI allows for extracting content from unstructured documents, including text, logos, and handwriting.

While already available, Cortex Search gains new utility with the rise of agentic AI and Cortex AI SQL, particularly in use cases like applying taxonomies for business term recognition and automated classification.
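
Mapping free-text business terms onto a taxonomy is essentially a nearest-match search. The sketch uses `difflib.get_close_matches` from Python's standard library, which compares character sequences, as a simple stand-in for the semantic search Cortex Search performs. The taxonomy, its variants, and the 0.75 cutoff are hypothetical.

```python
from difflib import get_close_matches
from typing import Dict, List, Optional

# Hypothetical taxonomy: canonical business term -> known variants and synonyms.
TAXONOMY: Dict[str, List[str]] = {
    "Customer Churn": ["churn", "customer attrition", "cancellation rate"],
    "Gross Margin": ["gross margin", "gm", "gross profit margin"],
    "Net Revenue": ["net revenue", "net sales", "revenue after returns"],
}

# Invert to variant -> canonical term so a match resolves in one lookup.
VARIANTS: Dict[str, str] = {
    variant: canon for canon, variants in TAXONOMY.items() for variant in variants
}


def classify_term(term: str, cutoff: float = 0.75) -> Optional[str]:
    """Map a free-text term to its canonical taxonomy entry, or None if nothing is close."""
    matches = get_close_matches(term.lower().strip(), list(VARIANTS), n=1, cutoff=cutoff)
    return VARIANTS[matches[0]] if matches else None


for raw in ["customer atrition", "Net Sales", "weather forecast"]:
    print(raw, "->", classify_term(raw))
```

Character-level similarity catches typos and spelling variants but not synonyms with different spelling. That is why the taxonomy lists variants explicitly, and why embedding-based search is the usual choice in practice.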

Finally, Cortex Analyst—the core “talk-to-your-data” feature—has seen significant improvements with the integration of semantic views. It now offers high-accuracy results and intuitive usability, making it a strong candidate for chatbot integration or as a foundation for custom analytics UIs.

Looking Ahead

I believe Snowflake is well-positioned to meet most of an enterprise's data operation needs. Although Snowflake has implemented its task functionality, I still see room for improvement, particularly in support for orchestrators like Apache Airflow or Dagster, as well as stronger deployment automation capabilities. While Snowflake has done a great job addressing the core needs of corporate data teams, I have not seen seamless integration of BI tools beyond Power BI, nor features that fully leverage existing data capabilities across platforms. Some features, such as the Generation 2 standard warehouse, are still limited to specific regions, and OpenFlow is currently available only on the AWS cloud.

I also think talk-to-your-data functionality would benefit from simple, no-code visualizations, ideally defined declaratively. I am keen to see broader support for tools like DBT projects within Snowsight workspaces. Despite these gaps, I think Snowflake has made a tremendous impact in reshaping the data landscape and enabling both AI transformation and data democratization. It's worth noting that not all features mentioned here are currently available, even in preview. I expect it will take some time before they reach a broader audience in a more refined state.